Today, CNCF is publishing the second of our quarterly CNCF End User Technology Radars; the topic for this Technology Radar is observability.

In June, we launched the CNCF End User Technology Radar, a new initiative from the CNCF End User Community. This is a group of more than 140 top companies and startups that meet regularly to discuss challenges and best practices when adopting cloud native technologies. The goal of the CNCF End User Technology Radar is to share which tools are actively being used by end users, the tools they would recommend, and their patterns of usage. More information about the methodology can be found at the end of this post.

We are also excited to launch radar.cncf.io, where you can find other Radars, the votes, and industries represented.

The survey on observability

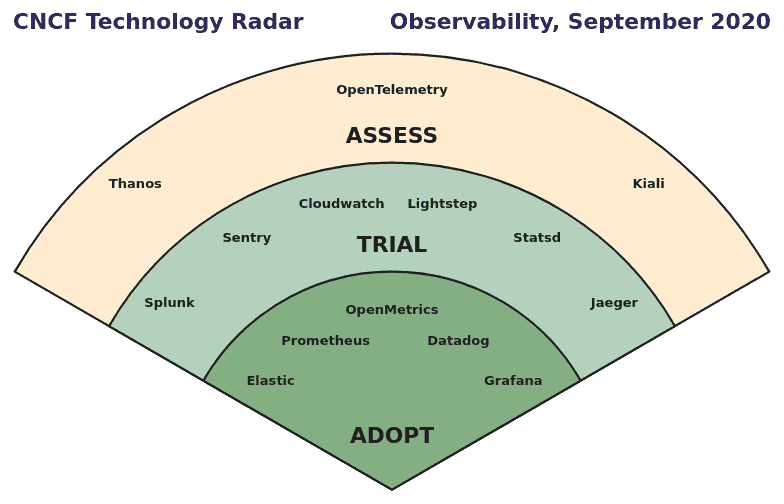

During August 2020, the members of the End User Community were asked which observability solutions they had assessed, trialed, and subsequently adopted. A total of 283 data points were sorted and reviewed to determine the final positions.

The radar may be read as follows:

- The five tools in the “Adopt” ring are widely adopted and recommended by the respondents.

- The technologies in “Trial” were recommended by a number of end users, but they either did not have enough total responses, or only received a few “Adopt” votes.

- Projects in “Assess” lack clear consensus. OpenTelemetry, Kiali, and Thanos have wide awareness, but only a few users recommend adoption. Organizations that are looking for new observability tools should take into account their own requirements when considering those in “Assess.”

The themes

The themes describe interesting patterns and editor observations:

- The most commonly adopted tools are open source. The three tools that received the most “Adopt” votes (Prometheus, Grafana, Elastic) and the five tools that received the most total votes (Prometheus, Grafana, Elastic, Jaeger, OpenTelemetry) are all open source.

It was interesting to see that companies have adopted and maintained these open source systems and, with in-house investment, have been able to scale them to large deployments. Although it takes at least a small team to deploy, maintain, and scale these open source systems, companies seem to think this tradeoff is worth it compared to using SaaS providers.

At the same time, there doesn’t seem to be a clear delineation, in terms of size or engineering capabilities, between companies running open source tooling and those adopting an observability SaaS platform. Open standards such as OpenMetrics and OpenTelemetry are being adopted regardless of whether companies are using open source or SaaS solutions. Plus, some of the companies that ultimately adopted SaaS platforms did evaluate and prototype self-managed platforms before deciding against them. Perhaps the conclusion to draw is that the quick pace of new technologies requires new observability techniques, which in turn require almost constant evaluation and adoption of new tools.

- There’s no consolidation in the observability space. Many companies are using multiple tools: half of the companies are using 5 or more tools, and a third of them had experience with 10+ tools.

Observability inherently requires looking at the data from different views to try to answer questions. Different tools have strengths in different techniques and integrations, which may be the reason why end users end up with multiple tools. Once adopted, it may be difficult to switch from one set of tools to another or to even consolidate. For most end users, observability is not their core business, so the investment needed to switch tools is often not easily funded. This may be a large reason why there are so many “Adopt” votes in this radar.

Anecdotally, companies are constantly experimenting with and introducing new tools, looking for better ways to observe their systems. With the advent of cloud native technologies like Kubernetes, different tools are needed for monitoring. For example, Nagios was really popular five years ago, but it is not as relevant now for users who need to monitor Kubernetes workloads.

- Prometheus and Grafana are frequently used together. Two-thirds of the respondents are using these two tools in tandem. This comes as no surprise, but the high correlation is still interesting to note. Momentum behind both projects, coupled with little competition, could have helped them gain such a high rate of adoption. Plus, there are many tutorials and installers that make it easy to use them together. There’s minimal resistance to using them hand-in-hand.

The editors

Jon Moter is Senior Principal Engineer at Zendesk. Jon works in the Foundation Engineering organization, which provides compute, storage, and cloud infrastructure to the rest of Zendesk engineering. Twitter: @jonmoter

Kunal Parmar is Director of Software Development at Box. Kunal leads their cloud native team, driving the adoption of Kubernetes, service mesh, and observability.

Marcin Suterski is Lead Engineer at The New York Times. Marcin is part of the Delivery Engineering team, which provides tools, processes and education to engineering teams across the organization. His current focus is on observability.

Jason Tarasovic is Principal Engineer at PayIt. Jason was the founding engineer for the Platform Engineering team, where he was responsible for building and running their cloud native platform. Twitter: @J_Tarasovic

Read more

Case studies: Read how Uber, Adform and Grafana Labs are handling observability using CNCF technologies.

What’s next

The next CNCF End User Technology Radar is targeted for December 2020, focusing on a different topic in cloud native. Vote to help decide the topic for the next CNCF End User Technology Radar.

Join the CNCF End User Community to:

- Find out who exactly is using each project and read their comments

- Contribute to and edit future CNCF End User Technology Radars

We are excited to provide this report to the community, and we’d love to hear what you think. Email feedback to info@cncf.io.

About the methodology

In August 2020, the 140 companies in the CNCF End User Community were asked to describe what their companies recommended for different solutions, choosing among Hold, Assess, Trial, or Adopt. They could also give more detailed comments. As the answers were submitted via a Google spreadsheet, they were neither private nor anonymized within the group.

A total of 32 companies submitted 283 data points on 34 solutions. These were sorted in order to determine the final positions. Finally, the themes were written to reflect broader patterns, in the opinion of the editors.