At CNCF TAG Developer Experience, we recently set out to understand how Artificial Intelligence is shaping open-source development. The response from the community has been impressive in its scale, with nearly half of our initial responses arriving within the first week alone. This immediate engagement highlights the urgency of the topic and the community’s desire for shared guidelines for AI-assisted development.

The data collected from 133 respondents so far represents nearly 100 unique projects, giving us confidence that these findings reflect the cloud-native ecosystem at large rather than a narrow subset of projects. This article serves as a sneak peek into our initial findings; a more comprehensive analysis will follow as we process further data.

Who is answering?

The feedback primarily reflects the perspectives of those on the front lines. The vast majority of participants are code-centric contributors focusing on submission, CI/CD, and infrastructure, while approximately 20% combine engineering with critical roles like release management and documentation.

How maintainers use AI: Tools and workflows

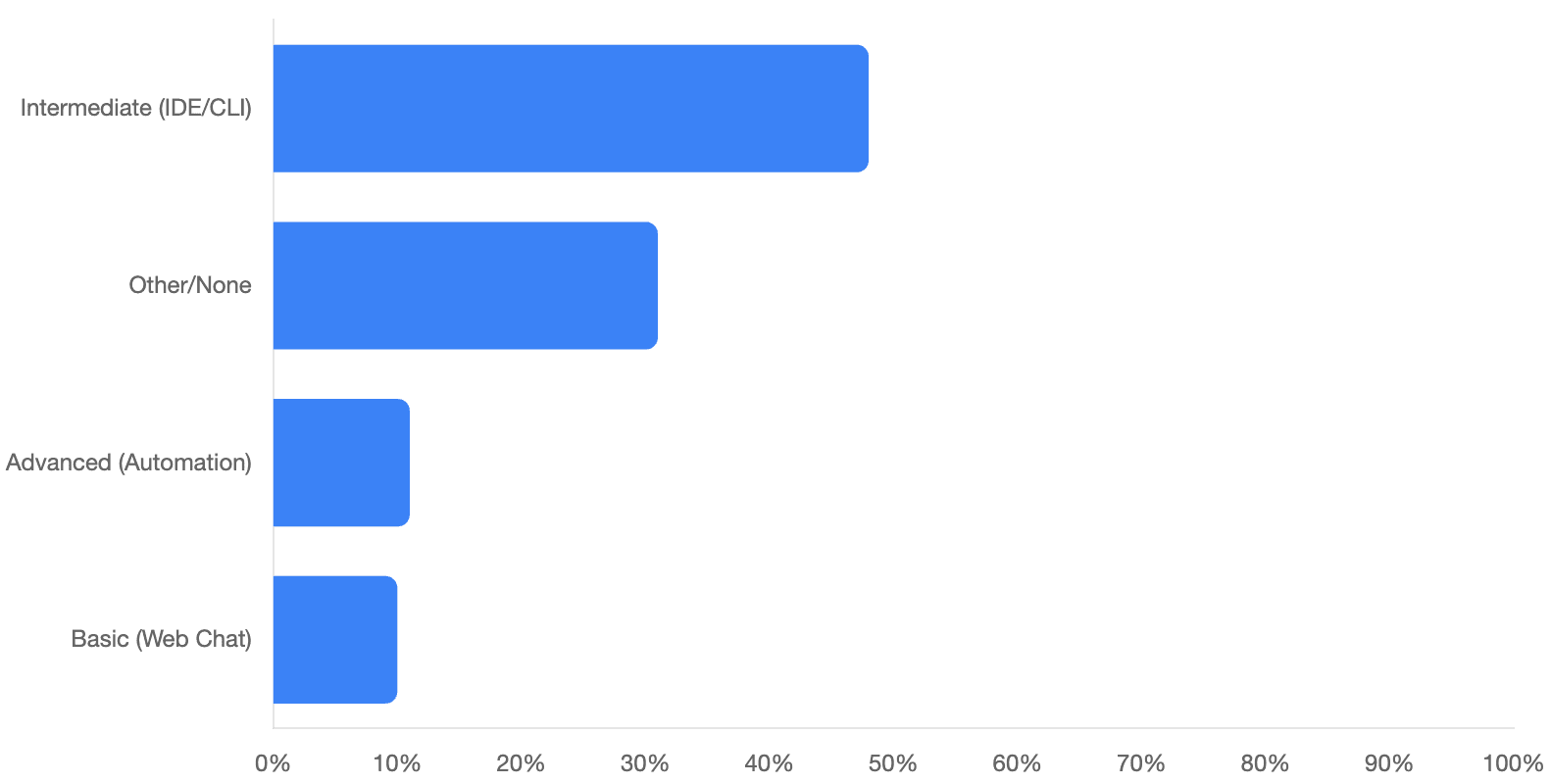

Modern AI tools have moved beyond simple web chatbots and are now deeply integrated into daily routines. Nearly half of respondents actively use AI assistants directly within their IDEs or command-line interfaces.

When it comes to tooling, Claude Code and GitHub Copilot emerge as clear leaders in the space. Interestingly, only a small fraction (roughly 10%) of contributors still rely on basic chatbots via manual copy-pasting. Meanwhile, a similar percentage of advanced users has already moved toward “high-level integration,” where AI is built directly into project automation for PR reviews and issue triaging.

Where AI helps the most

Contributors are seeing the most significant boosts in productivity within a few specific areas:

- Writing and refactoring code.

- Improving documentation and debugging.

- Understanding unfamiliar codebases.

- Analyzing Pull Requests.

The high ranking of “understanding the codebase” suggests that AI is acting as a knowledgeable guide, helping developers navigate the inherent complexity of large-scale projects.

The gap between AI use and official policies

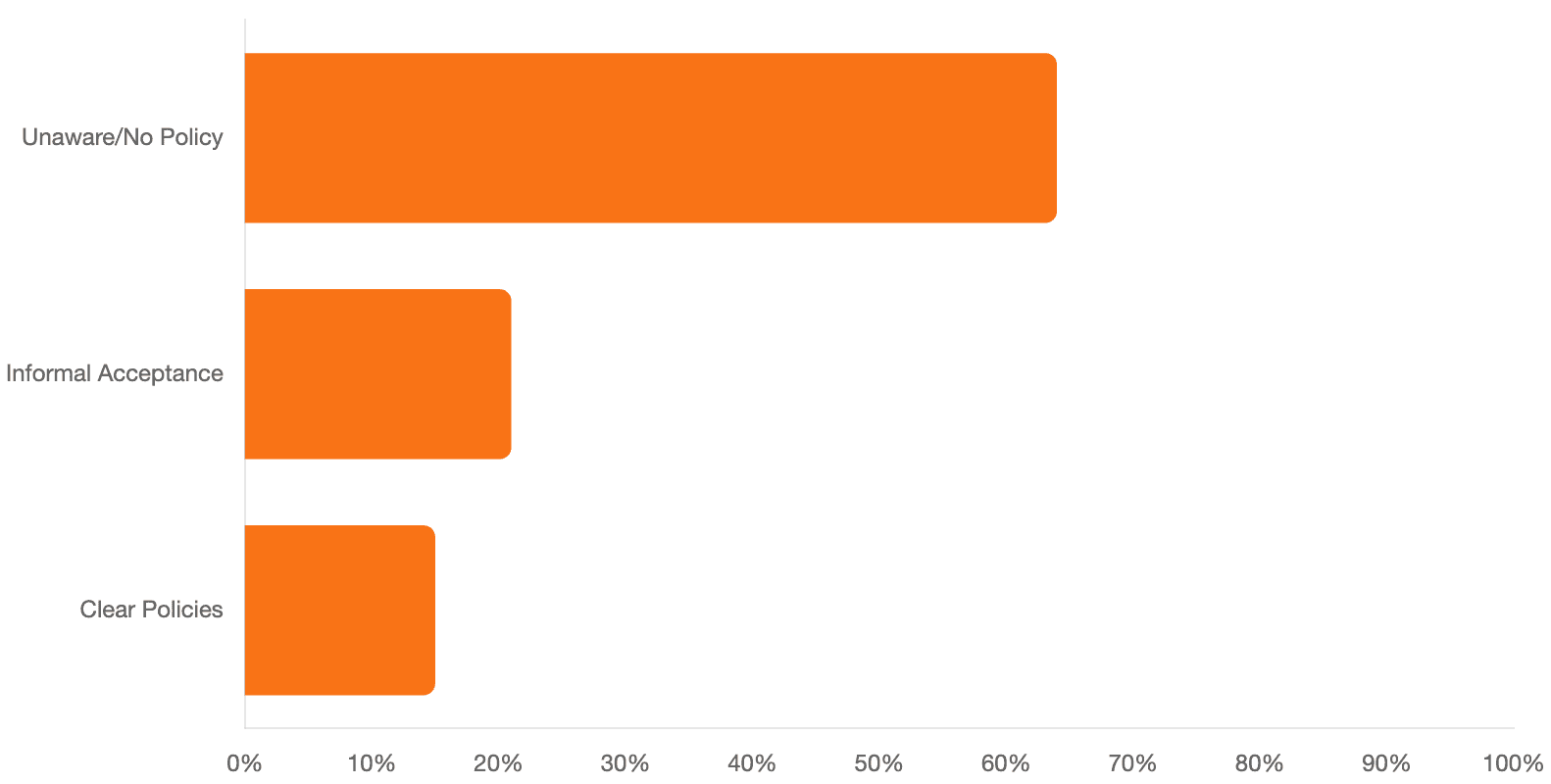

One of our most striking findings is the disconnect between individual AI usage and formal project governance. While local AI use is widespread, the adoption of official policies has lagged behind.

Roughly two-thirds of respondents are either unaware of any specific AI guidelines or confirmed that no official policies exist in their main repositories. Furthermore, the vast majority of projects make no mention of AI usage in their public-facing documentation or contributing guides. While a few pioneering projects are setting the pace with clear policies, the ecosystem as a whole is still figuring out how to govern automated code generation.

Community sentiment and code reviews

Despite the lack of formal rules, the general “vibe” toward AI is open and accepting. Roughly one-third of contributors noted that AI usage is generally allowed. Conversely, only a tiny minority (less than 4%) reported that AI usage is explicitly prohibited in their environments.

This pragmatic approach extends to how maintainers handle AI-generated contributions:

- A solid majority follow their standard review process without applying special filters.

- Over a quarter of maintainers prefer a collaborative approach, asking contributors to refine AI-generated code to meet quality standards rather than rejecting it.

- Only a nominal percentage automatically reject suspected AI PRs.

Top concerns and the call for transparency

While the community is optimistic, several valid concerns remain. Maintainers are particularly worried about:

- Security vulnerabilities.

- License compliance.

- The burden on reviewers, caused by a potential flood of low-effort PRs.

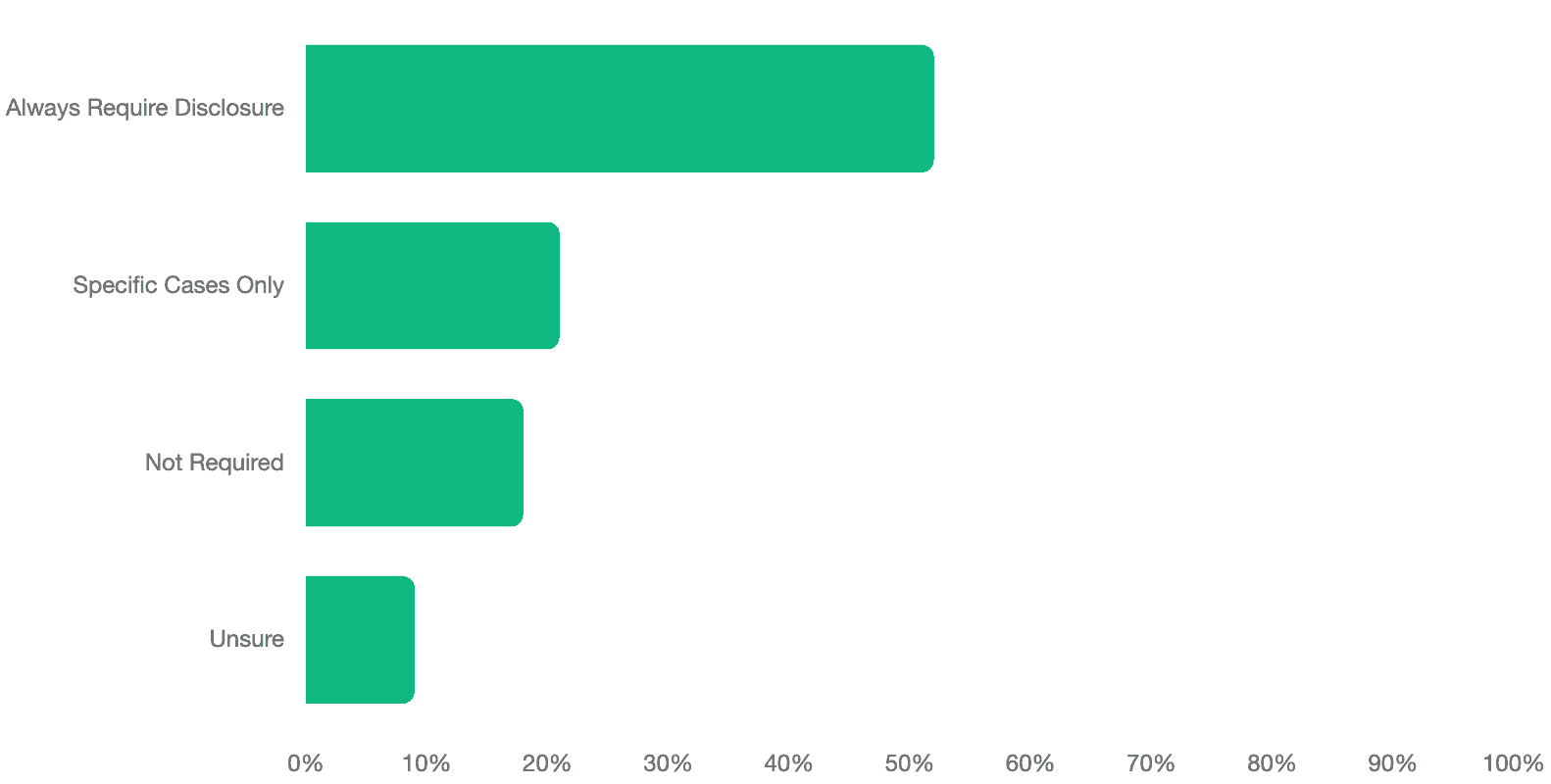

To mitigate these risks, there is a strong collective desire for visibility. Over half of respondents believe that AI-assisted contributions should always require formal disclosure (such as an “AI-authored” tag). An additional 20% feel disclosure should be required in specific cases. This suggests that while maintainers are willing to accept AI-generated code, they want the transparency necessary to adjust their review efforts accordingly.

Wrapping up

This first batch of data confirms that AI integration is no longer just a trend; it is a core part of the modern workflow. As we move forward, the challenge for the cloud-native community will be balancing this new productivity with the high standards of security and manual oversight that enterprise-grade open source requires.

We aren’t finished yet! To ensure our final report truly reflects the diverse cloud-native landscape, we need your voice. The survey will remain open until Monday, May 18 (End of Day, Anywhere on Earth). If you haven’t shared your experience yet, we invite you to contribute to the survey and help us build a more accurate and comprehensive picture of AI’s role in our community.