Workiva: Using OpenTracing to help pinpoint the bottlenecks

Challenge

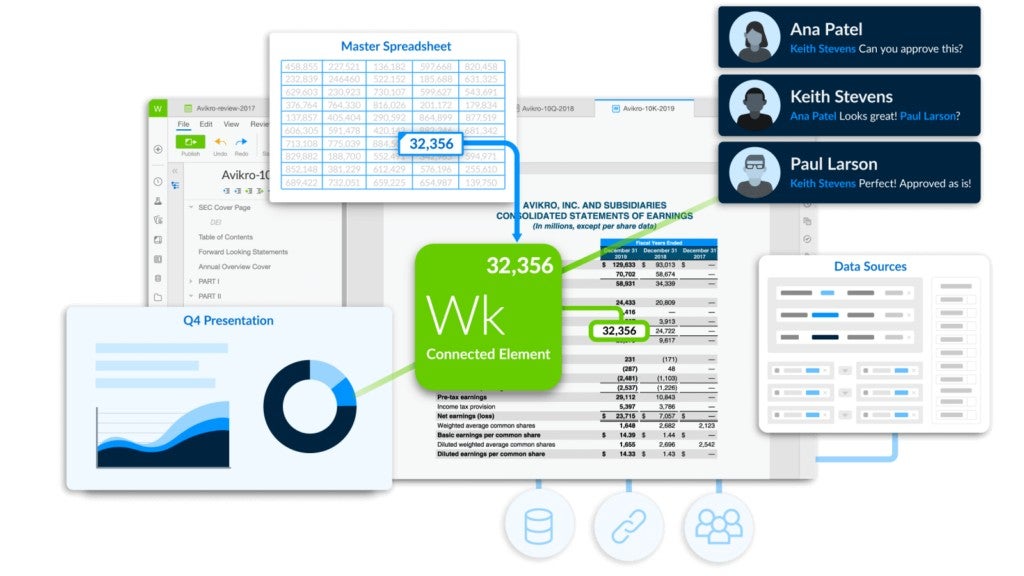

Workiva offers Wdesk, a cloud-based platform for managing and reporting business data. As the company made the shift from a monolith to a more distributed, microservice-based system, “we had a number of people working on this, all on different teams, so we needed to identify what the issues were and where the bottlenecks were,” says Senior Software Architect MacLeod Broad. With back-end code running on Google App Engine, Google Compute Engine, and Amazon Web Services, Workiva needed a tracing system that was platform agnostic.

Solution

Broad’s team introduced the platform-agnostic distributed tracing system OpenTracing to help them pinpoint the bottlenecks.

Impact

OpenTracing produced immediate results, Software Engineer Michael Davis reports: “Tracing has given us immediate, actionable insight into how to improve our service. Through a combination of seeing where each call spends its time, as well as which calls are most often used, we were able to reduce our average response time by 95 percent (from 600ms to 30ms) in a single fix.”

By the numbers

Average response time

Reduced 95%

Customer base

More than 70% of the Fortune 500

One internal library for logging, telemetry, analytics, and tracing

Last fall, MacLeod Broad’s platform team at Workiva was prepping one of the company’s first products utilizing Amazon Web Services when they ran into a roadblock.

Early on, Workiva’s backend had run mostly on Google App Engine. But things changed along the way as Workiva’s SaaS offering, Wdesk, a cloud-based platform for managing and reporting business data, grew its customer base to more than 70 percent of the Fortune 500 companies. “As customer needs grew and the product offering expanded, we started to leverage a wider offering of services such as Amazon Web Services as well as other Google Cloud Platform services, creating a multi-vendor environment.”

With this new product, there was a “sync and link” feature by which data “went through a whole host of services starting with the new spreadsheet system [Amazon Aurora] into what we called our linking system, and then pushed through http to our existing system, and then a number of calculations would go on, and the results would be transmitted back into the new system,” says Broad. “We were trying to optimize that for speed. We thought we had made this great optimization and then it would turn out to be a micro optimization, which didn’t really affect the overall speed of things.”

The challenges faced by Broad’s team may sound familiar to other companies that have also made the shift from monoliths to more distributed, microservice-based systems. “We had a number of people working on this, all on different teams, so it was difficult to get our head around what the issues were and where the bottlenecks were,” says Broad.

“Each service team was going through different iterations of their architecture and it was very hard to follow what was actually going on in each team’s system,” he adds. “We had circular dependencies where we’d have three or four different service teams unsure of where the issues really were, requiring a lot of back and forth communication. So we wasted a lot of time saying, ‘What part of this is slow? Which part of this is sometimes slow depending on the use case? Which part is degrading over time? Which part of this process is asynchronous so it doesn’t really matter if it’s long-running or not? What are we doing that’s redundant, and which part of this is buggy?’”

Simply put, it was an ideal use case for tracing. “A tracing system can at a glance explain an architecture, narrow down a performance bottleneck and zero in on it, and generally just help direct an investigation at a high level,” says Broad. “Being able to do that at a glance is much faster than at a meeting or with three days of debugging, and it’s a lot faster than never figuring out the problem and just moving on.”

With Workiva’s back-end code running on Google Compute Engine as well as App Engine and AWS, Broad knew that he needed a tracing system that was platform agnostic. “We were looking at different tracing solutions,” he says, “and we decided that because it seemed to be a very evolving market, we didn’t want to get stuck with one vendor. So OpenTracing seemed like the cleanest way to avoid vendor lock-in on what backend we actually had to use.”

Once they introduced OpenTracing into this first use case, Broad says, “The trace made it super obvious where the bottlenecks were.” Even though everyone had assumed it was Workiva’s existing code that was slowing things down, that wasn’t exactly the case. “It looked like the existing code was slow only because it was reaching out to our next-generation services, and they were taking a very long time to service all those requests,” says Broad. “On the waterfall graph you can see the exact same work being done on every request when it was calling back in. So every service request would look the exact same for every response being paged out. And then it was just a no-brainer of, ‘Why is it doing all this work again?’”

Using the insight OpenTracing gave them, “My team was able to look at a trace and make optimization suggestions to another team without ever looking at their code,” says Broad. “The way we named our traces gave us insight whether it’s doing a SQL call or it’s making an RPC. And so it was really easy to say, ‘OK, we know that it’s going to page through all these requests. Do the work once and stuff it in cache.’ And we were done basically. All those calls became sub-second calls immediately.”
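The two ideas in that quote, span names that reveal the kind of work and caching work that repeats on every paged request, can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `span` helper is a tiny in-memory stand-in rather than the OpenTracing API, and the `sql.` and `rpc.` prefixes, function names, and data are hypothetical, not Workiva's actual naming scheme or code.

```python
import time
from contextlib import contextmanager
from functools import lru_cache

# Hypothetical in-memory span recorder, standing in for a real tracer.
SPANS = []


@contextmanager
def span(name):
    """Record how long the wrapped block took under the given span name."""
    start = time.perf_counter()
    try:
        yield
    finally:
        SPANS.append((name, time.perf_counter() - start))


@lru_cache(maxsize=None)
def load_calculation_inputs(document_id):
    # Hypothetical expensive step. The "sql." prefix tells a reader of the
    # trace that this span is a database call, without reading the code.
    with span("sql.load_calculation_inputs"):
        return tuple(range(5))


def serve_page(document_id, page):
    # The "rpc." prefix marks the span as a remote call handler.
    with span("rpc.serve_page"):
        inputs = load_calculation_inputs(document_id)  # cached after first call
        return [value * page for value in inputs]


# Page through three requests for the same document.
for page in range(1, 4):
    serve_page("doc-1", page)

sql_spans = [name for name, _ in SPANS if name.startswith("sql.")]
rpc_spans = [name for name, _ in SPANS if name.startswith("rpc.")]
print(len(rpc_spans), "rpc spans,", len(sql_spans), "sql span")
```

Without the cache, each of the three page requests would record its own `sql.` span, which is the kind of repetition a waterfall view makes visible. With it, the expensive step runs once and later pages reuse the result.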

“With OpenTracing, my team was able to look at a trace and make optimization suggestions to another team without ever looking at their code.”

— MACLEOD BROAD, SENIOR SOFTWARE ARCHITECT AT WORKIVA

After the success of the first use case, everyone involved in the trial went back and fully instrumented their products. Tracing was added to a few more use cases. “We wanted to get through the initial implementation pains early without bringing the whole department along for the ride,” says Broad. “Now, a lot of teams add it when they’re starting up a new service. We’re really pushing adoption now more than we were before.”

Some teams were won over quickly. “Tracing has given us immediate, actionable insight into how to improve our [Workspaces] service,” says Software Engineer Michael Davis. “Through a combination of seeing where each call spends its time, as well as which calls are most often used, we were able to reduce our average response time by 95 percent (from 600ms to 30ms) in a single fix.”

Most of Workiva’s major products are now traced using OpenTracing, with data pushed into Google StackDriver. Even the products that aren’t fully traced have some components and libraries that are.

Broad points out that because some of the engineers were working on App Engine and already had experience with the platform’s Appstats library for profiling performance, it didn’t take much to get them used to using OpenTracing. But others were a little more reluctant. “The biggest hindrance to adoption I think has been the concern about how much latency is introducing tracing [and StackDriver] going to cost,” he says. “People are also very concerned about adding middleware to whatever they’re working on. Questions about passing the context around and how that’s done were common. A lot of our Go developers were fine with it, because they were already doing that in one form or another. Our Java developers were not super keen on doing that because they’d used other systems that didn’t require that.”
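The "passing the context around" that developers asked about can be sketched as follows. This is a simplified illustration under stated assumptions, not the OpenTracing API or Workiva's middleware: the `SpanContext` type, the `inject` and `extract` helpers, and the `x-example-*` header names are all hypothetical.

```python
import uuid
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class SpanContext:
    """Hypothetical minimal trace context carried between services."""
    trace_id: str
    span_id: str
    parent_id: Optional[str] = None


def start_span(parent: Optional[SpanContext] = None) -> SpanContext:
    """Start a root span, or a child span that shares the parent's trace id."""
    span_id = uuid.uuid4().hex[:16]
    if parent is None:
        return SpanContext(trace_id=uuid.uuid4().hex, span_id=span_id)
    return SpanContext(parent.trace_id, span_id, parent_id=parent.span_id)


def inject(ctx: SpanContext, headers: Dict[str, str]) -> None:
    """Write the context into outgoing request headers (names are made up)."""
    headers["x-example-trace-id"] = ctx.trace_id
    headers["x-example-span-id"] = ctx.span_id


def extract(headers: Dict[str, str]) -> Optional[SpanContext]:
    """Read the caller's context from incoming headers, if present."""
    trace_id = headers.get("x-example-trace-id")
    span_id = headers.get("x-example-span-id")
    if trace_id is None or span_id is None:
        return None
    return SpanContext(trace_id, span_id)


def handle_request(headers: Dict[str, str]) -> SpanContext:
    """Server side: continue the caller's trace, or start a new one."""
    return start_span(extract(headers))


def call_downstream(ctx: SpanContext) -> SpanContext:
    """Client side: the context is an explicit argument, as in Go code."""
    client_span = start_span(ctx)
    headers: Dict[str, str] = {}
    inject(client_span, headers)
    return handle_request(headers)  # stands in for a real HTTP call


root = start_span()
server_span = call_downstream(root)
print(server_span.trace_id == root.trace_id)
```

Passing `ctx` explicitly through every call is the pattern the Go developers were already used to. Tracing systems that attach the context implicitly, for example through thread-local storage, hide this plumbing, which may be why it felt like extra work to developers coming from those systems.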

But the benefits clearly outweighed the concerns, and today, Workiva’s official policy is to use tracing. In fact, Broad believes that tracing naturally fits in with Workiva’s existing logging and metrics systems. “This was the way we presented it internally, and also the way we designed our use,” he says. “Our traces are logged in the exact same mechanism as our app metric and logging data, and they get pushed the exact same way. So we treat all that data exactly the same when it’s being created and when it’s being recorded. We have one internal library that we use for logging, telemetry, analytics and tracing.”

For Workiva, OpenTracing has become an essential tool for zeroing in on optimizations and determining what’s actually a micro-optimization by observing usage patterns. “On some projects we often assume what the customer is doing, and we optimize for these crazy scale cases that we hit 1 percent of the time,” says Broad. “It’s been really helpful to be able to say, ‘OK, we’re adding 100 milliseconds on every request that does X, and we only need to add that 100 milliseconds if it’s the worst of the worst case, which only happens one out of a thousand requests or one out of a million requests.’”

Unlike many other companies, Workiva also traces the client side. “For us, the user experience is important—it doesn’t matter if the RPC takes 100 milliseconds if it still takes 5 seconds to do the rendering to show it in the browser,” says Broad. “So for us, those client times are important. We trace it to see what parts of loading take a long time. We’re in the middle of working on a definition of what is ‘loaded.’ Is it when you have it, or when it’s rendered, or when you can interact with it? Those are things we’re planning to use tracing for to keep an eye on and to better understand.”

That also requires adjusting for differences in external and internal clocks. “Before time correcting, it was horrible; our traces were more misleading than anything,” says Broad. “So we decided that we would return a timestamp on the response headers, and then have the client reorient its time based on that—not change its internal clock but just calculate the offset on the response time to when the client got it. And if you end up in an impossible situation where a client RPC spans 210 milliseconds but the time on the response time is outside of that window, then we have to reorient that.”
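One reading of that time correction can be sketched in a few lines. This is an interpretation, not Workiva's implementation: it assumes the server timestamp arrives as milliseconds in a response header, ignores network latency on the return trip, and uses hypothetical function names.

```python
def server_time_in_window(client_start_ms, client_end_ms, server_timestamp_ms):
    """True if the server's timestamp falls inside the client's RPC span."""
    return client_start_ms <= server_timestamp_ms <= client_end_ms


def correct_client_span(client_start_ms, client_end_ms, server_timestamp_ms):
    """Return the client span as (start, end), reoriented if needed.

    If the server timestamp already falls inside the client's window, the
    clocks are plausibly consistent and the span is left alone. Otherwise
    the situation is "impossible", so the span is shifted by the offset
    between the server timestamp and the moment the client received the
    response. The client's own clock is never changed; only the recorded
    span times are adjusted.
    """
    if server_time_in_window(client_start_ms, client_end_ms, server_timestamp_ms):
        return client_start_ms, client_end_ms
    offset_ms = server_timestamp_ms - client_end_ms
    return client_start_ms + offset_ms, client_end_ms + offset_ms


# A 210 ms client RPC whose clock disagrees with the server's timestamp.
start, end = correct_client_span(1000, 1210, 5000)
print(start, end, end - start)
```

The span keeps its 210 ms duration after the shift. Only its position on the shared timeline moves, so client spans line up with the server spans in the same trace.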

Broad is excited about the impact OpenTracing has already had on the company, and is also looking ahead to what else the technology can enable. One possibility is using tracing to update documentation in real time. “Keeping documentation up to date with reality is a big challenge,” he says. “Say, we just ran a trace simulation or we just ran a smoke test on this new deploy, and the architecture doesn’t match the documentation. We can find whose responsibility it is and let them know and have them update it. That’s one of the places I’d like to get in the future with tracing.”