Part I: Architecture and Implementation

In production Kubernetes clusters, pulling container images from private registries happens thousands of times per day. Kubernetes distributions from major cloud vendors ship credential providers for their own registries, such as AWS ECR, Google GCR, and Azure ACR. A problem emerges, however, when you need to authenticate to private registry mirrors or pull-through caches. This is particularly common in air-gapped environments where organizations run their own mirror registries to reduce egress costs, improve performance, or meet compliance requirements.

Traditional approaches to mirror authentication require node-level configuration and direct access to nodes. Credentials must therefore be configured globally on each node and are shared across all namespaces, which breaks tenant isolation and violates the principle of least privilege.

The CRI-O project provides a credential provider that solves this problem by enabling authentication for registry mirrors using standard Kubernetes Secrets. This approach maintains security boundaries while preserving the performance benefits of registry mirrors.

Prerequisites

- Kubernetes 1.33 or later

- CRI-O 1.34 or later

- KubeletServiceAccountTokenForCredentialProviders feature gate enabled

- The credential provider binary installed on all nodes

The problem with node-level credentials

Traditional container registry authentication in Kubernetes has a fundamental limitation when working with private registry mirrors. The kubelet itself has no knowledge of mirror configuration. Mirrors are configured at the container runtime level through files like /etc/containers/registries.conf. There are currently no Kubernetes enhancement proposals to change this architecture, so mirror configuration remains outside the Kubernetes API.

This creates a technical problem: while you can use namespace-scoped secrets with imagePullSecrets for pulling from source registries, this doesn’t work when you want to use private mirrors or pull-through caches. Mirrors require node-level configuration, forcing you to use global credentials. This leads to three significant issues:

1. Security isolation is broken. When credentials are configured globally at the node level, they become accessible across all namespaces. A compromised pod in namespace A can potentially access credentials intended for namespace B. This violates basic security boundaries.

2. Operational complexity increases. Cluster administrators must manage credentials centrally, creating bottlenecks. Teams lose autonomy and must coordinate with platform teams for every credential update.

3. Compliance concerns arise. In regulated environments, credential sharing across project boundaries violates security policies. Audit trails become murky when credentials aren’t scoped to specific namespaces.

This is particularly problematic in multi-tenant platforms where different teams need isolated access to their respective private registries. Being forced to choose between using mirrors for performance and maintaining security isolation is not acceptable in production environments.

The solution: Kubelet credential provider plugins

Kubernetes introduced the kubelet image credential provider plugin API in version 1.20 and graduated it to stable in version 1.26. This API allows external executables to provide registry credentials dynamically, replacing the need for static node-level configuration.

The plugin model works through standard input and output (stdin/stdout). When the kubelet needs to pull an image, it invokes the plugin binary, passing a CredentialProviderRequest via stdin. The plugin returns a CredentialProviderResponse via stdout containing authentication credentials or, in the case of the CRI-O credential provider, signals that authentication is handled through alternative means.
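To make the stdin/stdout contract concrete, here is a minimal Python sketch of a plugin's request handling. The field names follow the credentialprovider.kubelet.k8s.io/v1 API; the `handle_request` helper and the sample credentials are hypothetical and are not taken from the CRI-O credential provider. A real plugin would read the request from `sys.stdin` and write the response to `sys.stdout`.

```python
import json

API_VERSION = "credentialprovider.kubelet.k8s.io/v1"


def handle_request(raw_request: str, credentials: dict) -> str:
    """Turn a CredentialProviderRequest (JSON text) into a CredentialProviderResponse.

    `credentials` maps a registry host to a (username, password) tuple. It stands
    in for whatever backend a real plugin would consult (an assumption).
    """
    request = json.loads(raw_request)
    if request.get("kind") != "CredentialProviderRequest":
        raise ValueError("unexpected kind: %r" % request.get("kind"))

    # Use the host part of the image reference as the registry key.
    registry = request["image"].split("/", 1)[0]

    auth = {}
    if registry in credentials:
        username, password = credentials[registry]
        auth[registry] = {"username": username, "password": password}

    response = {
        "apiVersion": API_VERSION,
        "kind": "CredentialProviderResponse",
        "cacheKeyType": "Image",
        "auth": auth,  # stays empty when the plugin has nothing to hand back
    }
    return json.dumps(response)


# A real plugin would do: sys.stdout.write(handle_request(sys.stdin.read(), ...))
sample = json.dumps({
    "apiVersion": API_VERSION,
    "kind": "CredentialProviderRequest",
    "image": "quay.io/myorg/app:1.0",
})
print(handle_request(sample, {"quay.io": ("myuser", "mypassword")}))
```

In this sketch an empty `auth` map models a response that carries no credentials; the exact shape of the response returned by the CRI-O credential provider may differ.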

Kubelet configuration requirements

Two kubelet flags enable credential provider plugins:

- --image-credential-provider-config: Absolute path to the configuration file.

- --image-credential-provider-bin-dir: Directory containing plugin binaries referenced by their name.

The configuration file specifies which plugins handle which image patterns. Starting with Kubernetes 1.33, the KubeletServiceAccountTokenForCredentialProviders feature gate enables the kubelet to pass service account tokens to credential provider plugins. This is required for the CRI-O credential provider to function, as it needs the token to extract the namespace and authenticate to the Kubernetes API using the pod’s own identity.

A complete configuration looks like this:

    apiVersion: kubelet.config.k8s.io/v1
    kind: CredentialProviderConfig
    providers:
      - name: crio-credential-provider
        matchImages:
          - docker.io
          - quay.io
        defaultCacheDuration: "1s"
        apiVersion: credentialprovider.kubelet.k8s.io/v1
        tokenAttributes:
          serviceAccountTokenAudience: https://kubernetes.default.svc
          cacheType: "Token"
          requireServiceAccount: false

The tokenAttributes section specifies how service account tokens are handled:

- serviceAccountTokenAudience: Specifies who the token is intended for (typically the Kubernetes API server URL). The API server validates that this matches before accepting the token.

- cacheType: Determines the caching scope (Token for per-token caching or ServiceAccount for per-service-account caching).

- requireServiceAccount: Whether the plugin requires the pod to have a service account. The kubelet passes the token from either the pod’s explicitly specified service account or the default service account for the namespace.

This configuration enables namespace-scoped credential retrieval without requiring node-level permissions.

How the CRI-O Credential Provider Works

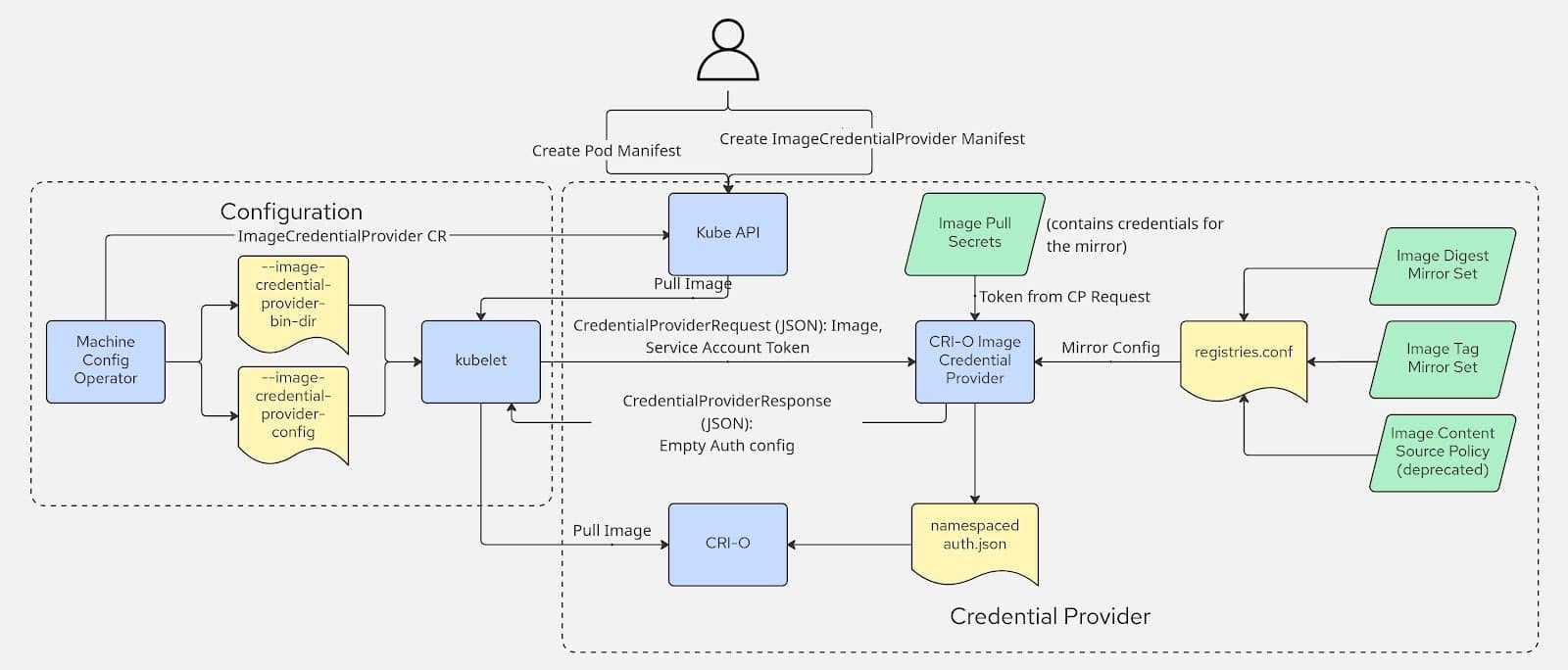

The CRI-O credential provider implements a workflow that extracts namespace information from service account tokens, discovers registry mirrors, retrieves secrets from that namespace, and generates short-lived authentication files consumed by CRI-O.

Simplified workflow

- Kubelet invokes the credential provider with an image name and service account token

- The credential provider:

  - Parses the JSON Web Token (JWT) to extract the namespace

  - Discovers configured mirrors from local configuration

  - Queries the Kubernetes API for all registry secrets in the namespace

  - Matches secrets against the mirrors and source image

  - Generates a short-lived auth file for CRI-O

  - Returns an empty success response to kubelet

- CRI-O uses the auth file for mirror authentication and cleans it up after the image pull

Complete workflow with component interactions

The diagram illustrates the complete interaction between components. When an image pull request arrives, the kubelet invokes the credential provider as an external executable, passing both the image reference and the pod’s service account token. The credential provider acts as a bridge between Kubernetes (where secrets are stored) and CRI-O (where mirrors are configured).

Token parsing and discovery

The provider parses the JWT to extract the namespace from the kubernetes.io claim without validating the token signature (validation happens when the token is used to call the Kubernetes API). Using this namespace, it queries the Kubernetes API for all secrets of type kubernetes.io/dockerconfigjson and reads the mirror configuration from the container runtime’s /etc/containers/registries.conf file.
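The token parsing step can be sketched in a few lines of Python. This is an illustration rather than the provider's actual code: the helper names and the demo token are made up, and the only detail taken from the text is that the namespace lives under the kubernetes.io claim and that the signature is not checked here.

```python
import base64
import json


def namespace_from_token(token: str) -> str:
    """Read the namespace from a service account JWT without verifying its signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected a JWT with three dot-separated segments")

    payload = parts[1]
    # JWT segments are base64url-encoded with the padding stripped; restore it.
    payload += "=" * (-len(payload) % 4)
    claims = json.loads(base64.urlsafe_b64decode(payload))
    return claims["kubernetes.io"]["namespace"]


def _encode(obj: dict) -> str:
    """Demo helper: base64url-encode a JSON object without padding."""
    raw = json.dumps(obj).encode()
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


# Build an unsigned, made-up token purely to exercise the parser.
demo_token = ".".join([
    _encode({"alg": "RS256", "typ": "JWT"}),
    _encode({"kubernetes.io": {"namespace": "team-a"}}),
    "signature-not-checked",
])
print(namespace_from_token(demo_token))  # prints: team-a
```

Skipping signature verification is safe only because the extracted namespace is used together with the same token in an API call, where the API server performs the real validation.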

Secret retrieval and credential matching

The credential provider uses the pod’s service account token to authenticate to the Kubernetes API and retrieve secrets from the pod’s namespace. This requires RBAC permissions for the service account to list and get secrets, plus cluster-level permissions for nodes to use credential providers.

It decodes each dockerconfigjson secret from base64, extracts the registry credentials, and normalizes registry URLs by stripping http:// and https:// prefixes. It then uses prefix matching to identify which credentials apply: a mirror URL like quay.io matches secrets for both quay.io and quay.io/myorg. Multiple secrets in the same namespace are all merged together.
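The decoding, normalization, and prefix matching described above might look roughly like the following Python sketch. The function names are hypothetical, and the boundary-aware prefix rule (exact match, or match up to a `/`) is an assumption; the provider's exact matching rule may differ.

```python
import base64
import json


def normalize(registry: str) -> str:
    """Strip an http:// or https:// scheme from a registry key."""
    for scheme in ("http://", "https://"):
        if registry.startswith(scheme):
            return registry[len(scheme):]
    return registry


def auths_from_secret(dockerconfigjson_b64: str) -> dict:
    """Decode a base64 .dockerconfigjson value into {normalized registry: entry}."""
    config = json.loads(base64.b64decode(dockerconfigjson_b64))
    return {normalize(reg): entry for reg, entry in config.get("auths", {}).items()}


def matching_auths(mirror: str, auths: dict) -> dict:
    """Keep the entries whose registry key equals the mirror or sits below it."""
    mirror = normalize(mirror)
    return {
        reg: entry
        for reg, entry in auths.items()
        if reg == mirror or reg.startswith(mirror + "/")
    }


# A made-up secret payload; the auth values are placeholders, not real credentials.
secret = base64.b64encode(json.dumps({
    "auths": {
        "https://quay.io": {"auth": "placeholder-1"},
        "quay.io/myorg": {"auth": "placeholder-2"},
        "http://localhost:5000": {"auth": "placeholder-3"},
    }
}).encode()).decode()

auths = auths_from_secret(secret)
print(sorted(matching_auths("quay.io", auths)))  # prints: ['quay.io', 'quay.io/myorg']
```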

The provider also reads the global kubelet auth file at /var/lib/kubelet/config.json if it exists and merges it with the namespace secrets, with namespace credentials taking precedence over global ones.
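The precedence rule can be illustrated with a small Python sketch. It assumes the global file uses the usual Docker config layout with a top-level `auths` map; the helper names are hypothetical.

```python
import json


def load_global_auths(path: str = "/var/lib/kubelet/config.json") -> dict:
    """Return the auths map from the global kubelet auth file, or {} if it is absent."""
    try:
        with open(path) as handle:
            return json.load(handle).get("auths", {})
    except FileNotFoundError:
        return {}


def merge_auths(global_auths: dict, namespace_auths: dict) -> dict:
    """Merge both maps; namespace entries overwrite global ones for the same registry."""
    merged = dict(global_auths)
    merged.update(namespace_auths)
    return merged


# Made-up entries that only show which side wins.
global_auths = {"localhost:5000": {"auth": "global"}, "quay.io": {"auth": "global"}}
namespace_auths = {"localhost:5000": {"auth": "namespace"}}

merged = merge_auths(global_auths, namespace_auths)
print(merged["localhost:5000"]["auth"])  # prints: namespace
print(merged["quay.io"]["auth"])         # prints: global
```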

Auth file generation and cleanup

The auth file is written to /etc/crio/auth/<namespace>-<sha256(image)>.json where the SHA256 hash of the image reference ensures a unique filename that prevents collisions between concurrent pulls of different images. CRI-O discovers this file through the naming convention, uses it to authenticate against the mirror registries during the image pull, and cleans it up after the pull completes. The provider returns an empty success response to the kubelet, signaling that authentication is handled.
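As a rough illustration of the naming convention, the following Python sketch derives an auth file path from a namespace and an image reference. Which exact form of the image reference is hashed (with or without a tag, normalized or not) is an assumption here, so the digest it produces is not guaranteed to match the one the provider writes.

```python
import hashlib

AUTH_DIR = "/etc/crio/auth"


def auth_file_path(namespace: str, image: str, auth_dir: str = AUTH_DIR) -> str:
    """Build <auth_dir>/<namespace>-<sha256(image)>.json for an image reference."""
    digest = hashlib.sha256(image.encode("utf-8")).hexdigest()
    return f"{auth_dir}/{namespace}-{digest}.json"


# Different images in the same namespace map to different files.
print(auth_file_path("default", "docker.io/library/nginx"))
print(auth_file_path("default", "docker.io/library/redis"))
```

Because the namespace is part of the filename, two namespaces pulling the same image also get separate files.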

This architecture maintains separation of concerns: Kubernetes manages secrets and identity, the container runtime manages mirror configuration, and the credential provider bridges the gap between them.

The implementation includes several performance optimizations: buffer pooling to reduce garbage collection pressure, streaming JSON parsing to avoid reading all input into memory, pre-allocated data structures to minimize allocations, and early loop exits when credentials are found. These optimizations matter because the credential provider executes for every image pull that matches the configured matchImages patterns. In a busy cluster pulling from configured registries, this can mean hundreds or thousands of invocations per hour.

A real world example

Let’s walk through a concrete example with real configuration files. The credential provider binary can be downloaded from the project’s releases or built from the repository. It must be installed on all nodes in the directory specified by --image-credential-provider-bin-dir.

Configure kubelet

Configure the kubelet to use the credential provider by creating /etc/kubernetes/credential-providers/config.yaml:

    apiVersion: kubelet.config.k8s.io/v1
    kind: CredentialProviderConfig
    providers:
      - name: crio-credential-provider
        matchImages:
          - docker.io
        defaultCacheDuration: "1s"
        apiVersion: credentialprovider.kubelet.k8s.io/v1
        tokenAttributes:
          serviceAccountTokenAudience: https://kubernetes.default.svc
          cacheType: "Token"
          requireServiceAccount: false

Note: The defaultCacheDuration and cacheType fields are required by the API but don’t currently affect behavior since the CRI-O credential provider doesn’t support credential caching. Auth files are generated fresh for each image pull.

Add the following kubelet flags to reference this configuration:

    --image-credential-provider-config=/etc/kubernetes/credential-providers/config.yaml
    --image-credential-provider-bin-dir=/usr/libexec/kubernetes/credential-providers
    --feature-gates=KubeletServiceAccountTokenForCredentialProviders=true

Set up registry mirror

Set up a private registry mirror on the node. Start a local registry at localhost:5000 with basic authentication:

    # Create htpasswd file with credentials (username: myuser, password: mypassword)
    mkdir -p /tmp/registry/auth
    podman run --rm --entrypoint htpasswd httpd:2 -Bbn myuser mypassword > /tmp/registry/auth/htpasswd

    # Start the registry with authentication
    podman run -d -p 5000:5000 \
      --name registry \
      -v /tmp/registry/auth:/auth \
      -e REGISTRY_AUTH=htpasswd \
      -e REGISTRY_AUTH_HTPASSWD_REALM="Registry Realm" \
      -e REGISTRY_AUTH_HTPASSWD_PATH=/auth/htpasswd \
      docker.io/library/registry:2

Push a test image to the mirror:

    # Log in to the local registry
    podman login localhost:5000 -u myuser -p mypassword

    # Pull and push a test image
    podman pull docker.io/library/nginx:latest
    podman tag docker.io/library/nginx:latest localhost:5000/library/nginx:latest
    podman push localhost:5000/library/nginx:latest

Configure mirror in CRI-O

Configure this registry as a mirror for docker.io in /etc/containers/registries.conf. When CRI-O attempts to pull an image from docker.io, it will first try to pull from the mirror:

    unqualified-search-registries = ["docker.io"]

    [[registry]]
    location = "docker.io"

    [[registry.mirror]]
    location = "localhost:5000"
    insecure = true
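To show how a mirror lookup against this configuration could work, here is a small Python sketch. It operates on the dictionary a TOML parser (for example `tomllib` in Python 3.11+) would produce for the file above. The `mirrors_for` helper and its longest-prefix rule are illustrative assumptions, not the provider's code.

```python
def mirrors_for(image: str, config: dict) -> list:
    """Return mirror locations for `image`, using the longest matching registry prefix."""
    best_prefix, best_mirrors = "", []
    for registry in config.get("registry", []):
        # registries.conf lets `prefix` default to `location` when it is omitted.
        prefix = registry.get("prefix") or registry.get("location", "")
        if not prefix:
            continue
        matches = image == prefix or image.startswith(prefix + "/")
        if matches and len(prefix) > len(best_prefix):
            best_prefix = prefix
            best_mirrors = [m["location"] for m in registry.get("mirror", [])]
    return best_mirrors


# The structure a TOML parser would produce for the configuration above.
config = {
    "unqualified-search-registries": ["docker.io"],
    "registry": [
        {
            "location": "docker.io",
            "mirror": [{"location": "localhost:5000", "insecure": True}],
        }
    ],
}
print(mirrors_for("docker.io/library/nginx", config))  # prints: ['localhost:5000']
print(mirrors_for("quay.io/myorg/app", config))        # prints: []
```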

Create registry credentials secret

Create a namespace secret containing credentials for authenticating to the mirror registry. The credential provider will retrieve this secret from the default namespace and use it to generate the auth file:

    apiVersion: v1
    kind: Secret
    type: kubernetes.io/dockerconfigjson
    metadata:
      name: my-secret
      namespace: default
    data:
      .dockerconfigjson: eyJhdXRocyI6eyJodHRwOi8vbG9jYWxob3N0OjUwMDAiOnsidXNlcm5hbWUiOiJteXVzZXIiLCJwYXNzd29yZCI6Im15cGFzc3dvcmQiLCJhdXRoIjoiYlhsMWMyVnlPbTE1Y0dGemMzZHZjbVE9In19fQo=

The base64-encoded .dockerconfigjson data decodes to:

    {
      "auths": {
        "http://localhost:5000": {
          "username": "myuser",
          "password": "mypassword",
          "auth": "bXl1c2VyOm15cGFzc3dvcmQ="
        }
      }
    }
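If you want to generate such a value yourself, the following Python sketch shows one way to build it. The `docker_config_json` helper is hypothetical. The `auth` field is the base64 encoding of `username:password`, and the whole document is base64-encoded again for the Secret’s data field. The outer encoding will not be byte-identical to the value above, because JSON whitespace and the trailing newline differ.

```python
import base64
import json


def docker_config_json(registry: str, username: str, password: str) -> str:
    """Return a base64-encoded .dockerconfigjson value for a single registry."""
    auth = base64.b64encode(f"{username}:{password}".encode()).decode()
    config = {
        "auths": {
            registry: {"username": username, "password": password, "auth": auth}
        }
    }
    return base64.b64encode(json.dumps(config).encode()).decode()


encoded = docker_config_json("http://localhost:5000", "myuser", "mypassword")

# Decode it again to confirm the inner "auth" field.
decoded = json.loads(base64.b64decode(encoded))
print(decoded["auths"]["http://localhost:5000"]["auth"])  # prints: bXl1c2VyOm15cGFzc3dvcmQ=
```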

Configure RBAC

Configure RBAC to allow the pod’s service account to read secrets in its namespace. The credential provider uses the pod’s service account token to authenticate to the Kubernetes API and retrieve registry secrets. Without these permissions, the API will reject the credential provider’s request to list and get secrets.

Cluster-level RBAC

Create cluster-level RBAC to allow nodes to use the credential provider:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: node-credential-providers
    rules:
      - apiGroups: [""]
        resources: ["serviceaccounts"]
        verbs: ["get", "list"]
      - apiGroups: [""]
        resources: ["*"]
        verbs: ["request-serviceaccounts-token-audience"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: node-credential-providers-binding
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: node-credential-providers
    subjects:
      - apiGroup: rbac.authorization.k8s.io
        kind: User
        name: system:node:your-node-name

The system:node:your-node-name subject must match the exact node identity. You need to create a ClusterRoleBinding for each node in your cluster, or add multiple subjects to a single binding.
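For clusters with many nodes, a single binding with multiple subjects can be generated instead of written by hand. The Python sketch below builds such a ClusterRoleBinding as JSON, which kubectl accepts alongside YAML. The node names `worker-0` and `worker-1` are placeholders, and the `node_binding` helper is hypothetical.

```python
import json


def node_binding(node_names: list) -> dict:
    """Build one ClusterRoleBinding that lists every node as a User subject."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRoleBinding",
        "metadata": {"name": "node-credential-providers-binding"},
        "roleRef": {
            "apiGroup": "rbac.authorization.k8s.io",
            "kind": "ClusterRole",
            "name": "node-credential-providers",
        },
        "subjects": [
            {
                "apiGroup": "rbac.authorization.k8s.io",
                "kind": "User",
                "name": f"system:node:{name}",
            }
            for name in node_names
        ],
    }


# Placeholder node names; in practice these come from `kubectl get nodes`.
binding = node_binding(["worker-0", "worker-1"])
print(json.dumps(binding, indent=2))
```

The printed document can be saved to a file and applied with `kubectl apply -f`.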

Namespace-level RBAC

Create namespace-level RBAC to allow service accounts to read secrets:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: Role
    metadata:
      name: secrets-role
      namespace: default
    rules:
      - apiGroups: [""]
        resources: ["secrets"]
        verbs: ["get", "list"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: secrets-role-binding
      namespace: default
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: secrets-role
    subjects:
      - apiGroup: rbac.authorization.k8s.io
        kind: User
        name: system:serviceaccount:default:default

Running the example

Create a file named pod.yaml that defines a pod pulling the nginx image from docker.io:

    apiVersion: v1
    kind: Pod
    metadata:
      name: nginx
      namespace: default
    spec:
      containers:
        - name: nginx
          image: docker.io/nginx

Deploy the pod:

    kubectl apply -f pod.yaml

When the pod is created and the kubelet attempts to pull the image, the credential provider is invoked. The provider logs (available via journald) show the complete flow:

    Running credential provider
    Reading from stdin
    Parsed credential provider request for image "docker.io/library/nginx"
    Parsing namespace from request
    Matching mirrors for registry config: /etc/containers/registries.conf
    Got mirror(s) for "docker.io/library/nginx": "localhost:5000"
    Getting secrets from namespace: default
    Unable to find env file "/etc/kubernetes/apiserver-url.env", using default API server host: localhost:6443
    Got 1 secret(s)
    Parsing secret: my-secret
    Found docker config JSON auth in secret "my-secret" for "http://localhost:5000"
    Checking if mirror "localhost:5000" matches registry "localhost:5000"
    Using mirror auth "localhost:5000" for registry from secret "localhost:5000"
    Wrote auth file to /etc/crio/auth/default-7e59ad64326bc321517fb6fc6586de5ee149178394d9edfa2a877176cdf6fad5.json with 1 number of entries
    Auth file path: /etc/crio/auth/default-7e59ad64326bc321517fb6fc6586de5ee149178394d9edfa2a877176cdf6fad5.json

Note: The warning about the missing /etc/kubernetes/apiserver-url.env file is expected. Platforms may use that env file to specify the API server URL, which will be picked up by the credential provider. Otherwise, it falls back to localhost:6443.

CRI-O logs

CRI-O then picks up the auth file and successfully pulls from the mirror. The CRI-O debug logs show it discovering and using the namespace-scoped auth file:

    DEBU[...] Looking for namespaced auth JSON file in /etc/crio/auth for image docker.io/nginx
    DEBU[...] Using auth file for namespace default: /etc/crio/auth/default-7e59ad64326bc321517fb6fc6586de5ee149178394d9edfa2a877176cdf6fad5.json
    INFO[...] Trying to access "localhost:5000/library/nginx:latest"
    INFO[...] Pulled image: docker.io/library/nginx@sha256:5ff65e8820c7fd8398ca8949e7c4191b93ace149f7ff53a2a7965566bd88ad23

Registry logs

The registry logs confirm the authenticated request:

    time="..." level=info msg="authorized request" http.request.host="localhost:5000" http.request.method="GET" http.request.uri="/v2/" http.request.useragent="cri-o/1.34.0" auth.user.name="myuser"
    time="..." level=info msg="authorized request" http.request.host="localhost:5000" http.request.method="GET" http.request.uri="/v2/library/nginx/manifests/latest" http.request.useragent="cri-o/1.34.0" auth.user.name="myuser"
    time="..." level=info msg="authorized request" http.request.host="localhost:5000" http.request.method="GET" http.request.uri="/v2/library/nginx/blobs/sha256:..." http.request.useragent="cri-o/1.34.0" auth.user.name="myuser"

Success! The pod starts, and the image is pulled from the private mirror using only namespace-scoped credentials, with no global credentials required.

Security considerations

The security model relies on Kubernetes’ existing RBAC system combined with service account token scoping. The fundamental security property is that each pod can only access secrets in its own namespace. This is enforced through multiple layers: the service account token is namespace-scoped, the Kubernetes API server enforces RBAC when the credential provider queries for secrets, and the auth file is cleaned up by CRI-O after the image pull completes.

Consider a threat scenario where a compromised pod in namespace A attempts to access credentials from namespace B. The attack fails because the service account token in the pod only grants access to namespace A, so the Kubernetes API server rejects attempts to list secrets in namespace B. Even if the attacker could somehow invoke the credential provider directly, it would only retrieve namespace A’s secrets. This maintains defense in depth where credentials never exist in a location accessible to other namespaces.

The auth files themselves are short-lived. They are created on-demand when an image pull begins, used by CRI-O during the pull operation, atomically moved to a temporary location during concurrent pulls to prevent race conditions, and deleted after the pull completes. This minimizes the window during which credentials exist on disk.

Conclusion

The CRI-O credential provider enables namespace-scoped authentication for private registry mirrors, maintaining security boundaries while preserving the performance benefits of mirrors. The solution leverages existing Kubernetes primitives like service account tokens, secrets, and RBAC. This makes it a natural fit for multi-tenant clusters.

In Part II (coming soon), we’ll see how platforms like OKD and OpenShift are integrating this capability natively, simplifying deployment through declarative APIs.