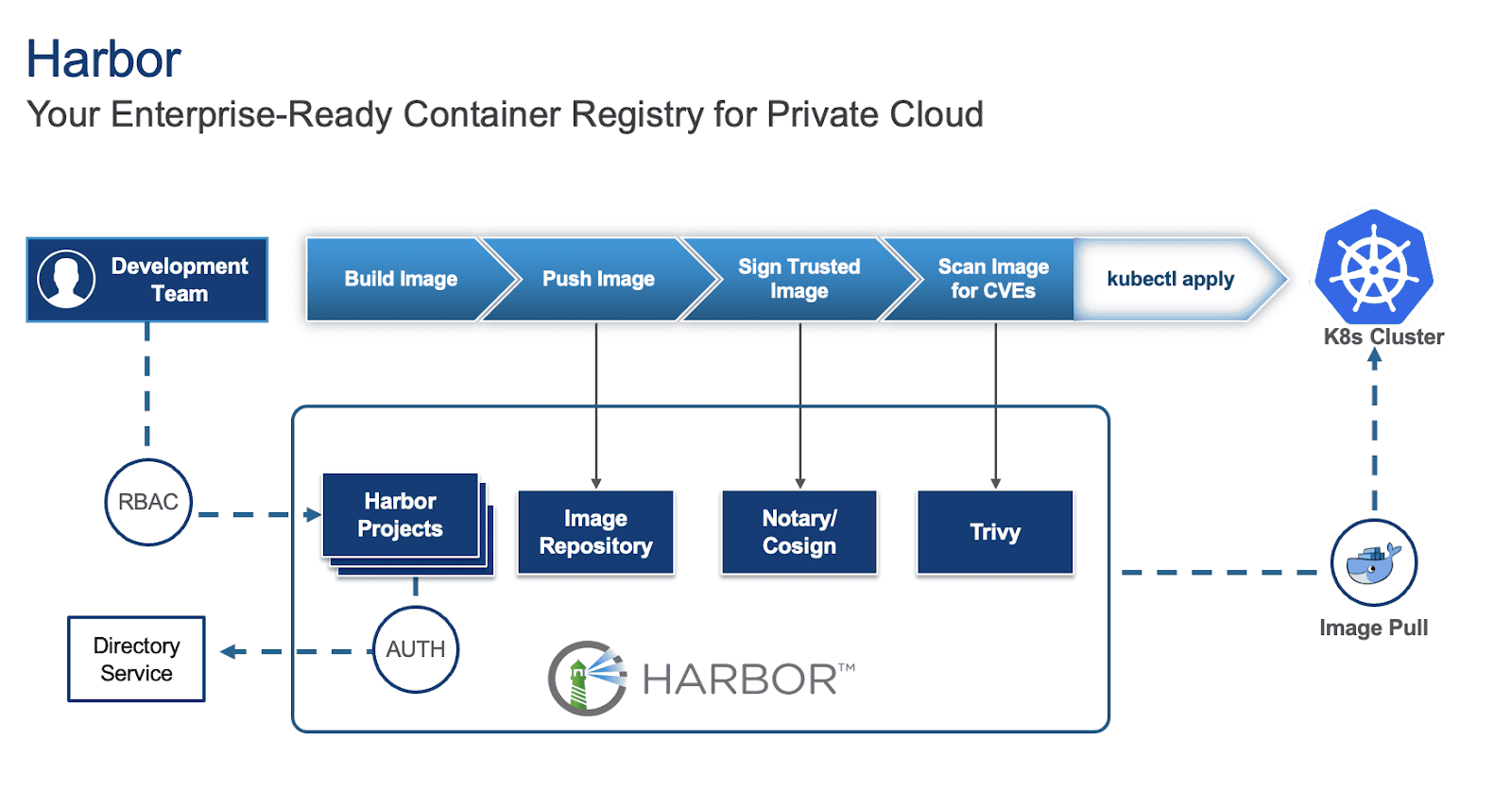

Harbor is an open-source container registry that secures artifacts with policies and role-based access control, ensuring images are scanned for vulnerabilities and signed as trusted. To learn more about Harbor and how to deploy it on a Virtual Machine (VM) and in Kubernetes (K8s), refer to parts 1 and 2 of the series.

While deploying Harbor is straightforward, making it production-ready requires careful consideration of several key aspects. This blog outlines critical factors to ensure your Harbor instance is robust, secure, and scalable for production environments.

For this blog, we will focus on Harbor deployed on Kubernetes via Helm as our base and provide suggestions for this specific deployment.

1. High Availability (HA) and scalability

For a production environment, single points of failure are unacceptable, especially for an image registry that will act as a central repository for storing and pulling images and artifacts for development and production applications. Thus, implementing high availability for Harbor is crucial and involves several key considerations:

- Deploy with an Ingress: Deploy an Ingress controller (e.g., Traefik) in front of your Harbor instances to distribute incoming traffic and provide a unified entry point, and use cert-manager to manage its certificates. You can specify this in your values.yaml file under:

```yaml
expose:
  type: ingress
  tls:
    enabled: true
    certSource: secret
    secret:
      # Secret that cert-manager fills with the issued certificate
      # (example name; cert-manager creates it from the annotation below)
      secretName: "harbor-tls"
  ingress:
    hosts:
      core: harbor.yourdomain.com
    annotations:
      # Specify your ingress class
      kubernetes.io/ingress.class: traefik
      # Reference your ClusterIssuer (e.g., self-signed or internal CA)
      cert-manager.io/cluster-issuer: "harbor-cluster-issuer"
```

To locate your values.yaml file, refer to the previous blog.
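Alongside the Ingress settings, set `externalURL` to the same host name, using the `https://` scheme. According to the chart's own comments, Harbor uses this value to populate the docker/helm commands shown in the portal and the token service URL returned to Docker clients, so a mismatch with the Ingress host can break `docker login`. A minimal sketch using the placeholder domain from the example above:

```yaml
# Must match the host name clients use to reach Harbor through the Ingress
externalURL: https://harbor.yourdomain.com
```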

- Utilize multiple Harbor instances: Increase the replica count for critical Harbor components (e.g., core, jobservice, portal, registry, trivy) in your values.yaml to ensure redundancy.

```yaml
core:
  replicas: 3
jobservice:
  replicas: 3
portal:
  replicas: 3
registry:
  replicas: 3
trivy:
  replicas: 3
# While not strictly needed for the HA of the registry itself, consider
# increasing exporter replicas for more robust monitoring availability
exporter:
  replicas: 3
# The bundled Nginx proxy is only deployed when expose.type is NOT "ingress"
# (clusterIP, nodePort, loadBalancer); in that case, increase its replicas.
# With an Ingress, scale your Ingress controller (e.g., Traefik) instead.
nginx:
  replicas: 3
```
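Multiple jobservice replicas also share a volume by default: with the `file` job logger, job logs are written to the jobservice log claim, which every replica must then be able to mount. A hedged alternative, assuming your chart version exposes `jobservice.jobLoggers` (check your values.yaml), is to keep job logs in the database instead:

```yaml
jobservice:
  replicas: 3
  # Store job logs in the (ideally external, HA) database rather than on a
  # file volume, so replicas do not need a shared ReadWriteMany log claim
  jobLoggers:
    - database
```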

- Configure shared storage: For persistent data, configure Kubernetes StorageClasses and PersistentVolumes to use shared storage solutions like vSAN or a distributed file system. Specify these in your values.yaml under:

```yaml
persistence:
  enabled: true
  resourcePolicy: "keep"
  persistentVolumeClaim:
    registry:
      # If left empty, the Kubernetes cluster's default storage class will be used
      storageClass: "your-storage-class"
    jobservice:
      jobLog:
        storageClass: "your-storage-class"
    database:
      storageClass: "your-storage-class"
    redis:
      storageClass: "your-storage-class"
    trivy:
      storageClass: "your-storage-class"
```
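When the registry runs with several replicas and uses `filesystem` storage, all registry pods mount the same claim, so it needs a StorageClass that supports ReadWriteMany (for example NFS, CephFS, or vSAN File Services). A sketch, assuming a hypothetical RWX-capable class named `your-rwx-storage-class` and a placeholder size. Object storage (`s3`, `azure`, `gcs`, ...) avoids the shared-volume requirement for image data altogether.

```yaml
persistence:
  persistentVolumeClaim:
    registry:
      storageClass: "your-rwx-storage-class"  # hypothetical RWX-capable class
      accessMode: ReadWriteMany               # one claim shared by all registry pods
      size: 500Gi                             # placeholder; size to your image volume
```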

- Enable database HA (PostgreSQL): While Harbor comes with a built-in PostgreSQL database, it is not recommended for production use, for the following reasons:

  - Lack of high availability (HA): The default internal PostgreSQL setup within the Harbor Helm chart is typically a single instance. This creates a single point of failure: if that database pod goes down, your entire Harbor instance becomes unavailable.

  - Limited scalability: An embedded database is not designed for independent scaling. As your Harbor usage grows, you might hit database performance bottlenecks that are difficult to address without disrupting Harbor itself.

  - Complex lifecycle management: Managing backups, point-in-time recovery, patching, and upgrades for a stateful database inside an application's Helm chart is significantly more complex and error-prone than with a dedicated database solution.

Thus, it is recommended to deploy a highly available PostgreSQL cluster within Kubernetes (e.g., using a Helm chart for Patroni or CloudNativePG) or leverage a managed database service outside the cluster. Configure Harbor to connect to this HA database by updating the values.yaml:

```yaml
database:
  type: "external"
  external:
    host: "192.168.0.1"
    port: "5432"
    username: "user"
    password: "password"
    coreDatabase: "registry"
    # If using an existing secret, the key must be "password"
    existingSecret: ""
    # "disable" - No SSL
    # "require" - Always SSL (skip verification)
    # "verify-ca" - Always SSL (verify that the certificate presented by the
    # server was signed by a trusted CA)
    # "verify-full" - Always SSL (verify that the certificate presented by the
    # server was signed by a trusted CA and the server host name matches the one
    # in the certificate)
    sslmode: "verify-full"
```
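To keep the database password out of values.yaml, `existingSecret` can reference a Kubernetes Secret. A sketch, assuming a hypothetical Secret named `harbor-db-credentials` in a hypothetical `harbor` namespace (use the namespace Harbor is installed in). As the chart comment above notes, the key must be `password`:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: harbor-db-credentials  # hypothetical name
  namespace: harbor            # hypothetical; use Harbor's release namespace
type: Opaque
stringData:
  # The key must be "password"; the value here is a placeholder
  password: "change-me"
```

Then set `database.external.existingSecret: "harbor-db-credentials"` in values.yaml, and the inline `password` value is no longer needed.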

- Implement Redis HA: Deploy a highly available Redis setup in Kubernetes (e.g., using a Helm chart for Redis Sentinel) or use a managed Redis service. The chart's comments list standalone Redis and Redis Sentinel (`redis+sentinel`) as the supported external modes, so plan for Sentinel rather than Redis Cluster. Configure Harbor to connect to this HA Redis instance by updating `redis.type` and the connection details in values.yaml:

```yaml
redis:
  type: external
  external:
    addr: "192.168.0.2:6379"
    sentinelMasterSet: ""
    tlsOptions:
      enable: true
    username: ""
    password: ""
```
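For Redis Sentinel, the chart's comments describe `addr` as a comma-separated list of Sentinel endpoints and require `sentinelMasterSet` to name the monitored master set. A sketch with placeholder addresses and a hypothetical master set name `mymaster`; 26379 is the conventional Sentinel port:

```yaml
redis:
  type: external
  external:
    # Comma-separated Sentinel endpoints (placeholder addresses)
    addr: "192.168.0.2:26379,192.168.0.3:26379,192.168.0.4:26379"
    # Name of the master set monitored by Sentinel (hypothetical)
    sentinelMasterSet: "mymaster"
```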

2. Security best practices

Security is paramount for any production system, especially a container registry.

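- Enable TLS/SSL: Always enable TLS/SSL, both for external access (`expose.tls`) and for traffic between Harbor components (`internalTLS`).

```yaml
expose:
  tls:
    enabled: true
    # "auto" generates a self-signed certificate; use "secret" when the
    # certificate is issued by cert-manager (as in the Ingress example above)
    certSource: auto
    auto:
      commonName: ""
internalTLS:
  enabled: true
  strong_ssl_ciphers: true
  certSource: "auto"
  core:
    secretName: ""
  jobservice:
    secretName: ""
  registry:
    secretName: ""
  portal:
    secretName: ""
  trivy:
    secretName: ""
```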

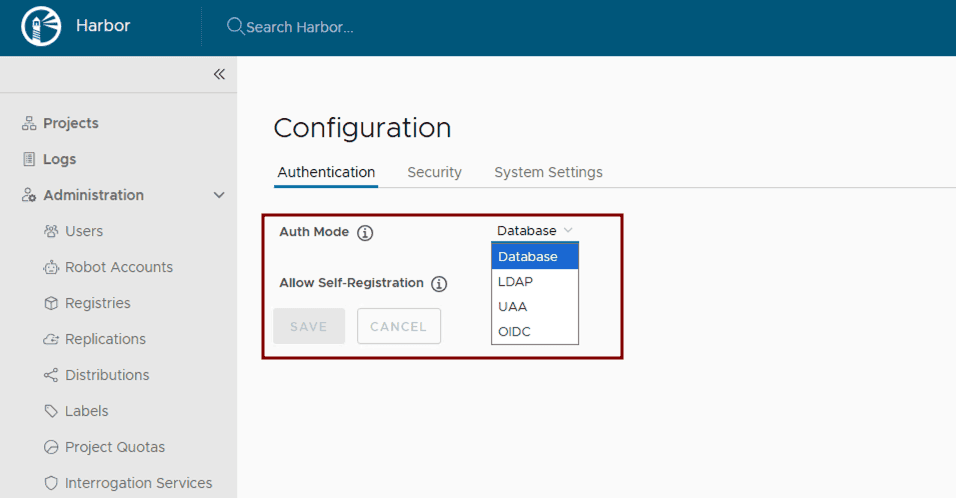

- Configure authentication and authorization: Use Harbor's supported authentication and authorization mechanisms to manage access to Harbor resources. After deploying Harbor, integrate it with an enterprise identity provider such as LDAP or OIDC by following the Harbor configuration guides: Configure LDAP/Active Directory Authentication or Configure OIDC Provider Authentication.

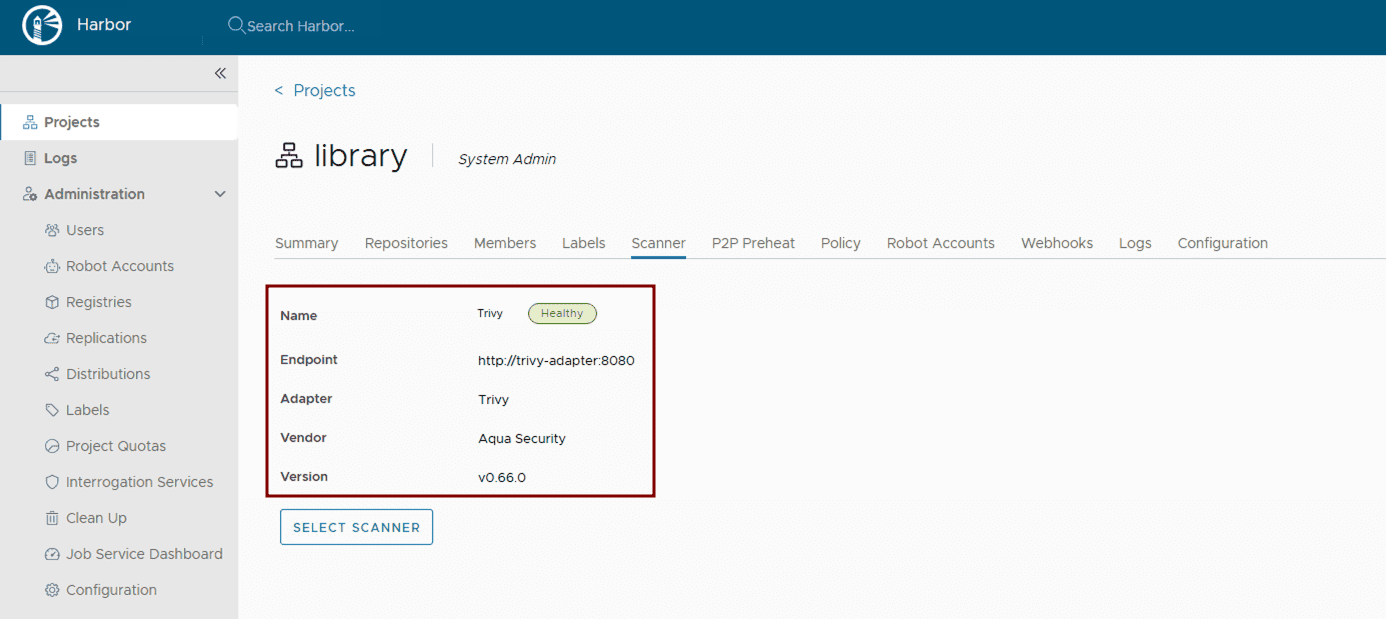

- Implement vulnerability scanning: Ensure vulnerability scanning is enabled in values.yaml. Harbor uses Trivy by default. Verify its activation and configuration within the Helm chart.

```yaml
trivy:
  enabled: true
```

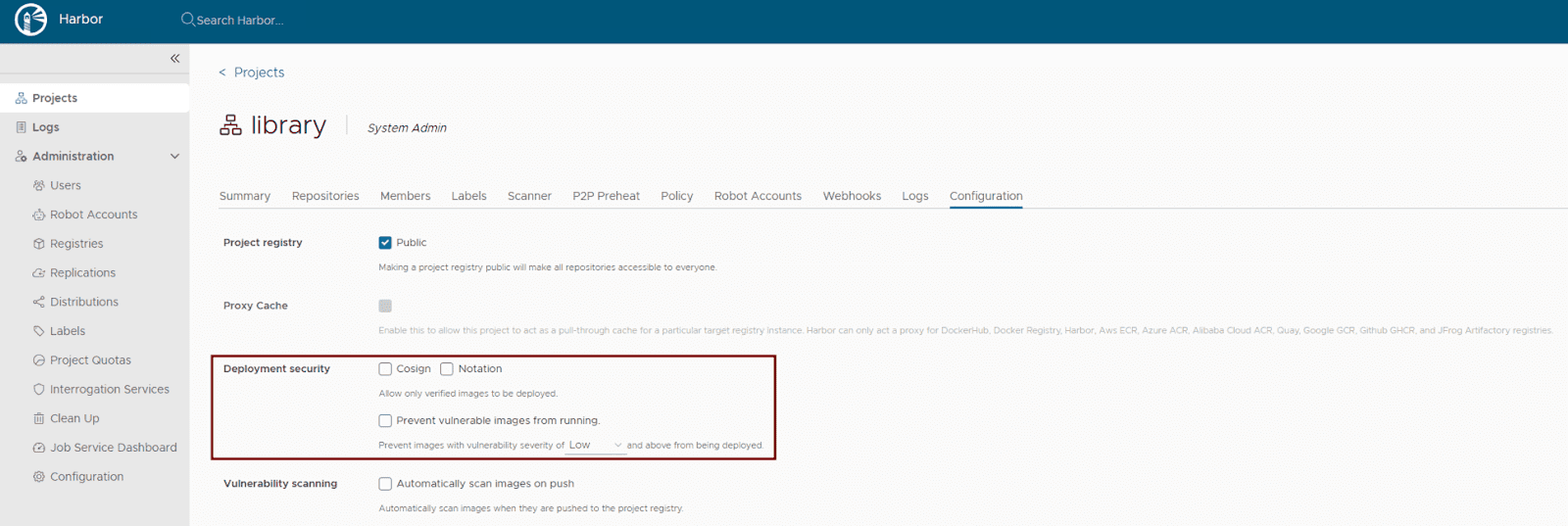
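The same `trivy` block also controls how scan results are reported and whether the vulnerability database is updated. A sketch, assuming your chart version exposes these keys (check your values.yaml); the values shown are the common defaults, to be adjusted for your policy:

```yaml
trivy:
  enabled: true
  # Severities Trivy reports; narrow the list to reduce noise if needed
  severity: "UNKNOWN,LOW,MEDIUM,HIGH,CRITICAL"
  # true = hide vulnerabilities that have no fixed version yet
  ignoreUnfixed: false
  # true = skip vulnerability DB downloads (air-gapped clusters must then
  # supply the DB by other means)
  skipUpdate: false
```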

- Activate content trust: Harbor supports multiple content trust mechanisms to ensure the integrity of your artifacts; for modern OCI artifact signing, Cosign and Notation are recommended. Enforce deployment security at the project level, in the Harbor UI or via the Harbor API, so that only trusted, cryptographically signed images can be deployed.

- Maintain regular updates: Regularly update your Harbor Helm chart and underlying Kubernetes components to benefit from the latest security patches and bug fixes. Use helm upgrade for this purpose.

- Use robot accounts for automation: Use robot accounts (service accounts) in automation such as CI/CD pipelines instead of user credentials. Grant each robot account only the privileges required for the task it was created for, which keeps its scope limited.

- Fine-grained audit log: As of Harbor v2.13.0, Harbor supports redirecting specific event types in the audit log. For example, "authentication failure" events can be enabled in the audit log and forwarded to a third-party syslog endpoint.

3. Storage considerations

Efficient and reliable storage is critical for Harbor’s performance and stability.

- Choose appropriate storage type: Define Kubernetes StorageClasses that align with your underlying infrastructure (e.g., nfs-client, aws-ebs, azure-disk, gcp-pd), and select the backend for image and chart data under `imageChartStorage`. Specify these settings in your values.yaml:

```yaml
persistence:
  enabled: true
  resourcePolicy: "keep"
  imageChartStorage:
    # Specify storage type: "filesystem", "azure", "gcs", "s3", "swift", "oss"
    type: ""
    # Configure the specific storage type section based on the selected option
```
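As an illustration of a filled-in section, the sketch below selects S3 for image and chart data and sets the size of the Trivy claim. The region, bucket, optional endpoint, and size are placeholders; with `accesskey`/`secretkey` omitted, the registry's S3 driver is assumed to pick up credentials from its environment (for example an IAM role). With `filesystem` storage instead, your sizing estimate belongs in `persistentVolumeClaim.registry.size`.

```yaml
persistence:
  enabled: true
  resourcePolicy: "keep"
  imageChartStorage:
    type: s3
    s3:
      region: us-east-1          # placeholder
      bucket: harbor-artifacts   # hypothetical bucket name
      # regionendpoint: https://minio.example.internal  # only for S3-compatible stores
  persistentVolumeClaim:
    trivy:
      size: 10Gi                 # placeholder; holds the Trivy DB and cache
```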

- Estimate storage sizing: Carefully calculate your storage needs based on the anticipated number and size of container images, as well as your defined retention policies. Configure the size for your PersistentVolumeClaims in values.yaml.

- Implement robust backup and recovery: Establish a comprehensive backup strategy for all Harbor data. For Kubernetes-native backups, consider using tools like Velero to back up PersistentVolumes and Kubernetes resources. For object storage, leverage the cloud provider’s backup mechanisms or external backup solutions. Regularly test your recovery procedures.

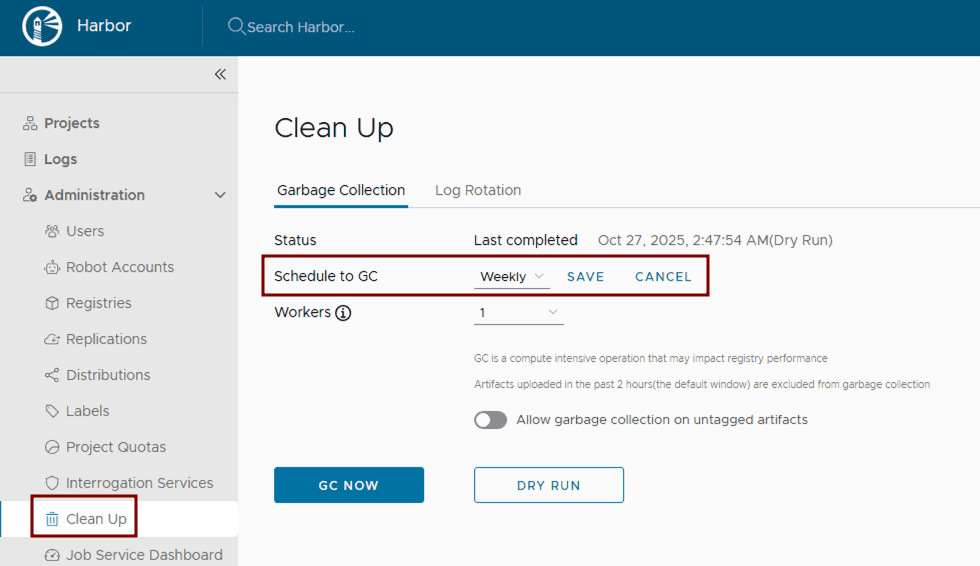

- Configure and run garbage collection: Set up and routinely execute Harbor’s garbage collection. This can be configured through the Harbor UI by defining a schedule for automated runs to remove unused blobs and efficiently reclaim storage space.
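For the Velero option from the backup item above, a scheduled backup of the Harbor namespace might look like the following sketch. It assumes Velero is installed in the `velero` namespace with a backup storage location and a volume snapshot location already configured, and that Harbor runs in a hypothetical `harbor` namespace. An external PostgreSQL database and artifacts kept in object storage live outside this namespace and need their own backup routines.

```yaml
apiVersion: velero.io/v1
kind: Schedule
metadata:
  name: harbor-daily          # hypothetical name
  namespace: velero
spec:
  schedule: "0 2 * * *"       # cron: every day at 02:00
  template:
    includedNamespaces:
      - harbor                # hypothetical Harbor namespace
    snapshotVolumes: true     # snapshot PVs via the configured snapshot location
    ttl: 720h0m0s             # keep each backup for 30 days
```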

4. Monitoring and alerting

Proactive monitoring and alerting are essential for identifying and addressing issues before they impact users.

- Collect comprehensive metrics: Deploy Prometheus and configure it to scrape metrics from Harbor components. The Harbor Helm chart exposes Prometheus-compatible endpoints, enabled under `metrics` in values.yaml. Visualize these metrics using Grafana.

```yaml
metrics:
  enabled: true
  core:
    path: /metrics
    port: 8001
  registry:
    path: /metrics
    port: 8001
  jobservice:
    path: /metrics
    port: 8001
  exporter:
    path: /metrics
    port: 8001
  serviceMonitor:
    enabled: true
    # This label ensures the Prometheus Operator picks up these monitors
    additionalLabels:
      release: kube-prometheus-stack
```

With `serviceMonitor.enabled: true`, the chart creates the ServiceMonitor objects for you. If you would rather manage them yourself, set it to `false` and create objects such as the examples below. The Service labels and the metrics port name depend on the chart version, so verify them first (for example with `kubectl get svc --show-labels`).

```yaml
# Harbor Core (API and auth performance)
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: harbor-core
  labels:
    app: harbor
    release: kube-prometheus-stack
spec:
  selector:
    matchLabels:
      app: harbor
      component: core
  endpoints:
    - port: metrics   # Name of the Service port serving metrics (8001 by default)
      path: /metrics
      interval: 30s
---
# Harbor Exporter (business metrics)
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: harbor-exporter
  labels:
    app: harbor
    release: kube-prometheus-stack
spec:
  selector:
    matchLabels:
      app: harbor
      component: exporter
  endpoints:
    - port: metrics
      path: /metrics
      interval: 60s   # Scraped less frequently as these are high-level stats
```

- Centralized logging: Implement a centralized logging solution within Kubernetes, such as the ELK stack (Elasticsearch, Logstash, Kibana) or Fluentd/Fluent Bit shipping logs to Grafana Loki for viewing in Grafana.

- Configure critical alerts: Set up alerting rules in Prometheus (Alertmanager) or Grafana for critical events, such as component failures, high resource utilization (CPU/memory limits), storage nearing capacity, failed vulnerability scans, or unauthorized access attempts. Define these thresholds based on your production requirements.
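As a starting point for the alerts above, a PrometheusRule such as the following sketch could cover component failures and storage nearing capacity. It assumes the Prometheus Operator from kube-prometheus-stack (hence the `release` label), that the Harbor exporter exposes a `harbor_up` gauge with a `component` label (check your exporter's /metrics output), and that Harbor's PVC names contain "harbor"; the thresholds are placeholders.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: harbor-alerts                 # hypothetical name
  labels:
    release: kube-prometheus-stack    # must match your Prometheus ruleSelector
spec:
  groups:
    - name: harbor
      rules:
        - alert: HarborComponentDown
          # Assumed exporter metric: 0 when a Harbor component is not running
          expr: harbor_up == 0
          for: 5m
          labels:
            severity: critical
          annotations:
            summary: "Harbor component {{ $labels.component }} is down"
        - alert: HarborVolumeAlmostFull
          # Kubelet volume stats; the PVC name pattern is an assumption
          expr: |
            kubelet_volume_stats_available_bytes{persistentvolumeclaim=~".*harbor.*"}
              / kubelet_volume_stats_capacity_bytes{persistentvolumeclaim=~".*harbor.*"}
              < 0.15
          for: 15m
          labels:
            severity: warning
          annotations:
            summary: "PVC {{ $labels.persistentvolumeclaim }} has less than 15% free space"
```

Apply it with kubectl; if you render it through a Helm template instead, escape the `{{ $labels... }}` expressions so Helm does not evaluate them.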

5. Network configuration

Proper network configuration ensures smooth communication between Harbor components and external clients.

- Configure ingress or load balancer and DNS resolution: As already mentioned, deploy a Kubernetes Ingress controller or Load Balancer to expose Harbor externally. Ensure proper DNS records are configured to point to your Load Balancer’s IP address.

- Set up proxy settings (if applicable): If Harbor components need to access external resources through a corporate proxy, configure the proxy settings in values.yaml. Note that the `proxy.components` field explicitly defines which Harbor components (e.g., core, jobservice, trivy) use these proxy settings for their external communications.

```yaml
proxy:
  httpProxy:
  httpsProxy:
  noProxy: 127.0.0.1,localhost,.local,.internal
  components:
    - core
    - jobservice
    - trivy
```
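A filled-in sketch, assuming a hypothetical corporate proxy at `proxy.example.internal:3128`. Adding `.svc` and `.cluster.local` (and, as a placeholder here, a service CIDR of `10.96.0.0/12`) to `noProxy` is meant to keep calls to in-cluster endpoints, such as an in-cluster PostgreSQL, Redis, or OIDC provider, off the proxy; adjust these to your cluster's DNS suffix and CIDRs.

```yaml
proxy:
  httpProxy: "http://proxy.example.internal:3128"   # hypothetical proxy
  httpsProxy: "http://proxy.example.internal:3128"  # hypothetical proxy
  # Bypass the proxy for local and in-cluster destinations (placeholder CIDR)
  noProxy: 127.0.0.1,localhost,.local,.internal,.svc,.cluster.local,10.96.0.0/12
  components:
    - core
    - jobservice
    - trivy
```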

- Allocate sufficient bandwidth: Ensure your Kubernetes cluster’s underlying network infrastructure and nodes have sufficient bandwidth to handle peak image pushes and pulls. Monitor network I/O on nodes running Harbor pods.

Conclusion

By diligently addressing these considerations, you can transform your basic Harbor deployment into a robust, secure, and highly available production-ready container registry. This approach ensures that Harbor serves as a cornerstone of your cloud-native infrastructure, capable of supporting demanding development and production workflows. From implementing High Availability and stringent security measures to optimizing storage and establishing proactive monitoring, each step contributes to a resilient and efficient artifact management system.

Continue reading the Harbor Blog Series on cncf.io:

Blog 1 – Harbor: Enterprise-grade container registry for modern private cloud