Guest post by Nick Palumbo and Lewis Macdonald from Bloomberg

Over the last decade, numerous workflow orchestration platforms have become popular in the technology community. The need to run logical flows of tasks in order to move from a starting point to a desired end state is very common, and companies and communities have developed quite the set of varied solutions. They all have a lot to offer, but we’ll be focusing on what features we were looking for at the outset, and why we ultimately chose Argo.

What we needed from an orchestration platform

In order to choose any technology, you first need to ask yourself what you actually are trying to accomplish (aka what problem are you trying to solve?). The discussion that ultimately led us to Argo started with our Data Science Runtimes team. We were looking to create a system that would allow us to coordinate data processing, machine learning model training, evaluation, and serving. Furthermore, we wanted our ML engineers to be able to execute this training pipeline continuously.

The discussion grew as neighboring teams brought up ways that they could benefit from an orchestration system. One team wanted to be able to run a series of tasks that would copy/backup/restore databases. Another was looking to be able to deploy service configurations and trigger restarts. Yet another wanted to be able to package and publish artifacts of code as part of our deployment pipeline.

In the end, the need became clear: we needed a generic workflow orchestration system that would allow us to trigger and schedule related, discrete tasks that may have dependencies or even be conditional on one another. Furthermore, we wanted to build out the system as a managed platform — as something where a centralized team could manage the architecture and interfaces. This would create a consistent experience for the company’s 6,500+ engineers, avoid a proliferation of application teams having to support and manage orchestration infrastructure, condense upgrades and the need for debugging/troubleshooting into one location, and allow users to focus on their workflows and business logic.

Choosing a standout among standouts

Great! We had hammered out the problem that needed solving. But, as we had mentioned earlier, there are a lot of available frameworks that solve the problem of workflow orchestration. To really find the one that worked for us, we needed to dig a little deeper. Because of the well-established communities around them, we focused our effort on comparing three main technologies: Apache Airflow, Argo, and Tekton.

All three of these technologies solve this problem, and they solve it well. However, the desire to build out a managed platform on top of one of the technologies helped us start to differentiate them. While Airflow is a pillar in this space (and is already used by many teams here), it wasn’t best-suited for our specific requirements for this initiative. Our managed compute infrastructure is primarily built on Kubernetes, and Airflow is the only one of the three which does not run Kubernetes-native. As a result, this means it’s missing out on some convenient features of Kubernetes for a multi-tenant platform — specifically, namespacing and dynamic scaling, two features we will cover later.

Between Tekton and Argo, it became even harder to make a decision. They’re both cloud native and have strong communities around them. That said, at the time we were making this discussion, Argo had a couple things going for it that made it stand out as a framework. It’s the basis for Kubeflow, which is a production-grade tool available in both AWS and GCP. Its glut of useful features, which we will cover shortly, reflect that. Furthermore, it’s incubated at the Cloud Native Computing Foundation (CNCF), which is a promising sign for its community and support.

In the end, Argo fit the bill best for what we were looking for. While all three of the technologies mentioned have their own strengths and provide these features in some ways, below are the top 5 factors we’d like to highlight that made Argo really stand out for us.

Scalability

Argo runs natively in Kubernetes, which means that it scales readily. The Argo controller and server are lightweight processes that represent a very low operational overhead for managing large numbers of workflows. If you have a lot of workflows, you might simply bump up `–workflow-workers` in the workflow controller (see the Argo docs on scaling) and increase the compute power in your cluster or add more nodes.

Bloomberg’s Kubernetes footprint scales to hundreds of clusters across several availability zones that underpin our compute and data infrastructure. We need to manage various tenants’ workflows across varied use cases from Deep Learning to infrastructure management. Taking things a step further, in the next section on multi-tenancy, we’ll discuss namespaced mode, which helps achieve tenant isolation and also introduces the possibility of adding more horizontal scaling into our system.

Multi-tenancy

We absolutely need a clean multi-tenancy structure for our workflow platform. With dozens to hundreds of teams potentially making use of our system to orchestrate their tasks, we need to ensure that 1) our users do not impact one another and that 2) our users can have total confidence that their secrets, workflow history, etc., are only accessible securely to the respective team.

Kubernetes has a native concept of namespaces, which facilitate a level of isolation between functional groups in a single Kubernetes cluster. Argo deploys workflows into any given namespace. This means that if a bad (or confused) actor manages to muck things up in their namespace, it won’t affect the other tenants. It also means that we can repeatedly apply the same pattern of access for secrets, permissions, etc., across our tenants’ environments and have a high level of confidence that the systems are isolated from one another.

As an extension of this, Argo ships with a feature that facilitates multi-tenancy in another big way: namespaced mode. This mode can ship instances of the Argo Server and the Workflow Controller into independent namespaces. This effectively means that each tenant has their own dedicated orchestration system. With our multi-tenancy architecture, teams can use a self-service system to request and spin up their own namespace, complete with a full Argo installation and isolated access control, giving them a managed workflow orchestration platform at the click of a button.

Security

Security considerations take many forms – from the fundamental architecture of our software and how we secure vast amounts of sensitive data to the security of our container images and deployment processes. The Argo project takes Common Vulnerabilities and Exposures (CVEs) seriously — something we consider a critical factor when evaluating our technology supply chain.

The Argo server recently added built-in SSO capabilities to allow different tenants to have different access levels (e.g., cluster admins, tenant users, and superusers). When layered with Kubernetes RBAC, this gives us fine-grained control over who can do — and see — what, both programmatically and via the UI.

Finally, container image security is another area where being cloud-native helps Argo stand out. It is critical that we can control the images deployed in our users’ workflows and ensure they have gone through a rigorous internal CI/CD process — something we enforce using Kubernetes admission webhooks.

Ease-of-Use

The most important factor for a new technology platform to be adopted at any company is likely its ease-of-use. We use this term broadly, as it encompasses the user experience, the ease of integrating it with existing systems, and the effort required to set up new workflows. Fortunately for us, Argo covers all three of these requirements really well.

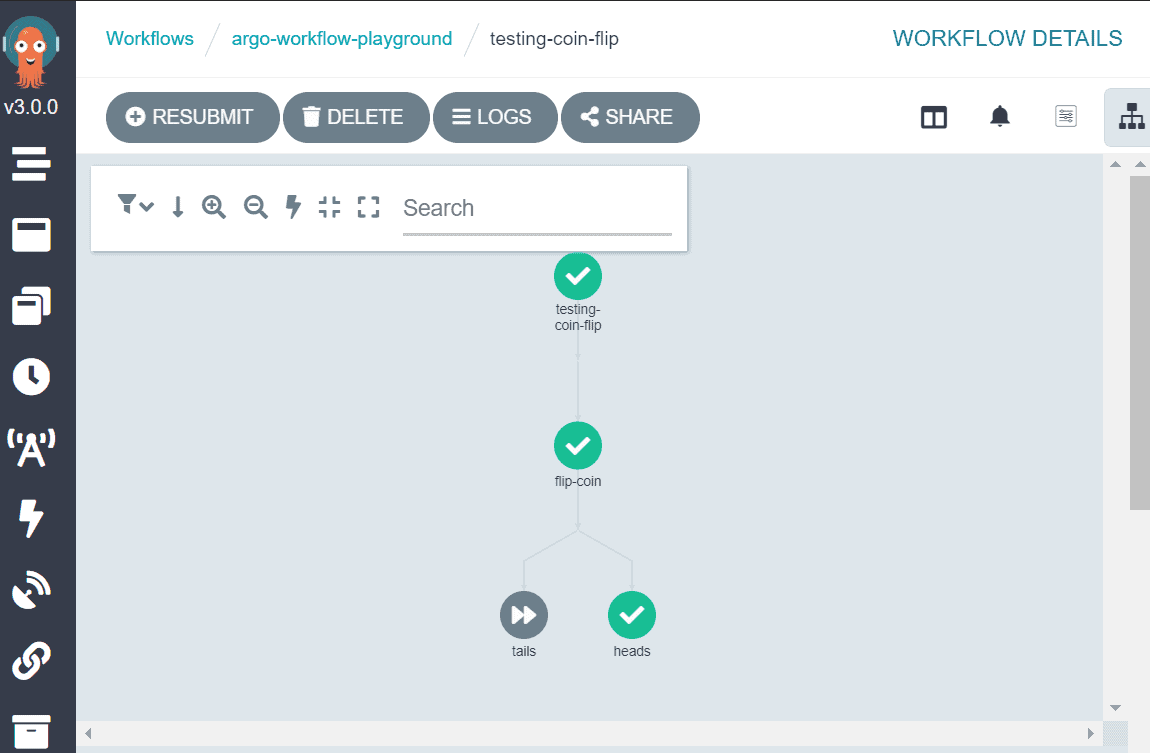

From a user experience perspective, Argo provides a clean, user-friendly interface to view and submit workflows, view workflow history, and manage templates. Plus, as of Argo v3.0.0, it even includes Argo Events.

In terms of system integration and workflow building, Argo offers a handful of crucial features that make these things a breeze. The first is its rich REST API, which enables a client to do quite literally anything they need to manage their orchestration service, including creating and updating workflow templates or pausing and resuming active workflows. Furthermore, since Argo runs your workflows as Kubernetes pods, everything is container-based. This means that any code that has already been containerized can immediately be made into a step of a workflow. Given the broad adoption of containers across the tech industry, this makes moving to Argo a snap.

Another notable differentiator for Argo as a workflow orchestration platform is that its DAGs are defined as YAML files. YAML provides a declarative format that is designed to be intuitive and human readable, meaning that one does not necessarily need to have engineering skills to be able to generate a new workflow! This also makes Argo pretty flexible in terms of interacting with it via an application. In addition to its officially supported Java and Go libraries, you can also make workflows by generating YAML files (see this blog post on using Python to author workflows).

Flexibility/Dynamism

There are many different use case patterns that we look to support in our workflow platform. This includes operations like scheduled database copies, on-demand ML pipelines, or event-driven data processing based on, say, S3 bucket notifications. Given the diverse nature of tasks we need to support, there are a couple of key things Argo supports out-of-the-box that made it a top option for us.

Argo supports the generation of dynamic DAGs for its workflows, even at run-time. This means we can map the output from one step in our workflow to any number of subsequent steps. Imagine a workflow that seeks to apply a database configuration change to a set of clusters. It may not be easy to know at initialization which nodes are running the database, and we might need to ask a remote server, “hey, where should I apply this configuration?” With a dynamic DAG, we can automate this into a workflow: one step to request the list of nodes and another step that is generated for each node based on the output of the prior one. In Argo, we can even run each of these steps in parallel!

Another core component that gives Argo a high level of flexibility is the native Argo Events system. It comes with support for triggering workflows (among other things) from many different event sources, including crucial things like HTTP requests and Apache Kafka messages. This allows us to easily integrate Argo-driven workflows with existing or new processes, opening up numerous possibilities for more complex pipelines.

Conclusion

We could go on and on about the numerous features that make Argo an attractive option for our workflow orchestration. There’s its native Prometheus metrics that enables quick, out-of-the-box monitoring, its support for failure callbacks that facilitate alerting into email or other messaging platforms, or even cluster workflow templates that allow us to build out a generic library of operators to support many clients… and more! But, for now, we’ll leave it at the five we mentioned.

When exploring the universe of workflow orchestration, it’s easy to get overwhelmed by the sheer number of options available. Due to its native cloud/Kubernetes support, its impressive set of features, its growing open source community, and its incubation under the CNCF’s open governance model, we opted for Argo. That said, you should always consider your project’s specific needs and exactly what you’re trying to build before making a decision for your organization. Of course, we’d certainly encourage you to check out Argo yourself.

About

Nick is a Senior Software Engineer on the Data Science Runtimes team, where he is building a managed platform for workflow orchestration.

Lewis is a Software Engineer on the Data Science Runtimes team, where he works on distributed compute platforms used by the company’s engineers.