Guest post by Kiran Mova, Co-founder of MayaData and Chief Architect of OpenEBS

One of the best things about open-source communities is the expertise, and the complaints, that users share. This real-world experience helps us learn how others solve the problems we might be facing. The OpenEBS community is no different: many users have taken the time to fill out ADOPTERS.md to share how they are using OpenEBS, the leader in Container Attached Storage (CAS), and how it solves their common problems when running data workloads on Kubernetes.

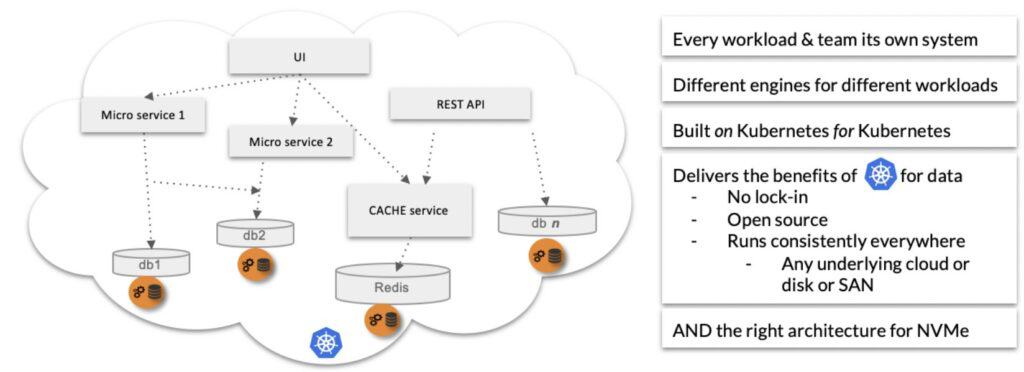

First, a little bit of background. Feel free to skip ahead if you already know what CAS is. In short, Container Attached Storage (CAS) disaggregates the storage system into many loosely coupled services that provide storage per workload and per small team. These services function independently while offering higher resilience through replicas, where configured, stored locally and remotely. Because of its distributed nature, this approach fits both cloud-native architectures and cloud-native organizational structures.

Originally developed by MayaData, OpenEBS is now a CNCF Sandbox project with a vibrant community of organizations and individuals alike.

OpenEBS includes:

- several flavors of local volume management,

- two versions of NFS for workloads requiring read-write-many, and

- the increasingly well-known high-performance OpenEBS Mayastor.

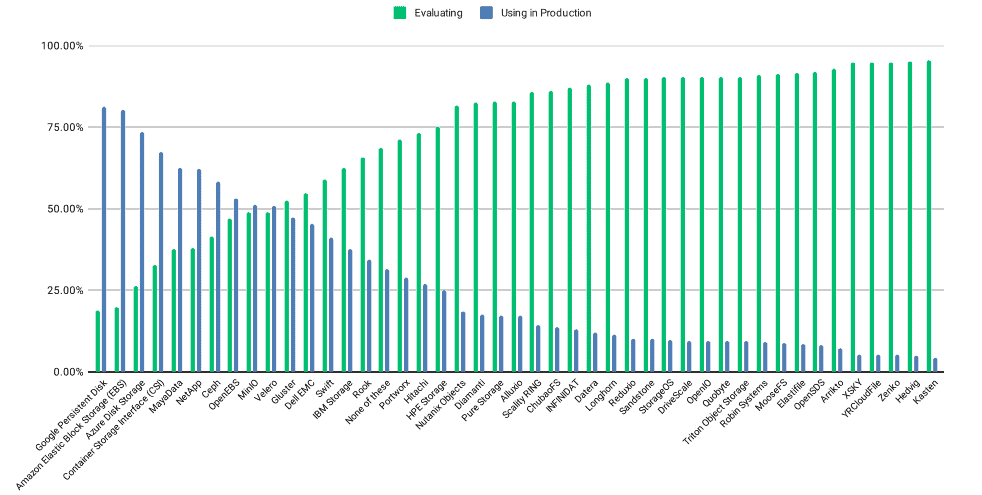

As the leading open-source Container Attached Storage software, OpenEBS has become a common storage choice for Kubernetes deployments since its first release in early 2017. This is reflected in CNCF’s 2020 Survey Report, which found that 55% of surveyed organizations already ran stateful applications in production, while 12% of the remainder were evaluating them and 11% were planning to adopt them. The survey also listed MayaData (OpenEBS) among the top five popular storage solutions, with more than 60% of its adopters already using it in production.

Reference Adopters

In this blog, we summarize what some OpenEBS adopters have shared about their experiences. You can also read these accounts directly, and contribute your own, here: https://github.com/openebs/openebs/blob/master/ADOPTERS.md.

First, a quick preview. As you can see, OpenEBS serves a wide range of use-cases as a storage solution, from home labs running on Raspberry Pis to the backend for some of the largest eCommerce and financial analytics providers.

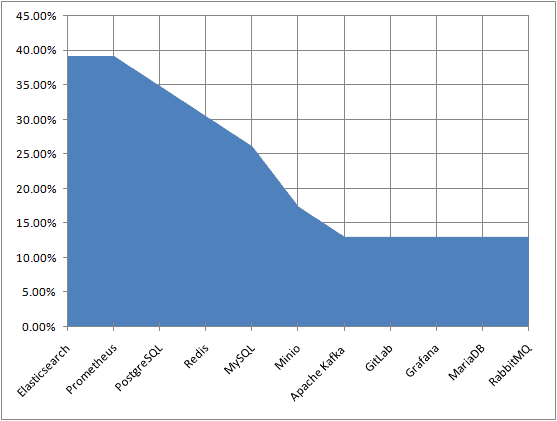

For this article, we collated the list of adopters and picked a few use-cases that highlight prominent workloads and the key features that helped organizations run stateful storage efficiently for both resilient and non-resilient workloads. Several data points gave us insight into organizational use-cases, but the most interesting was the sheer variety of workloads running on OpenEBS. The most common of these workloads are:

- Elasticsearch

- Prometheus

- PostgreSQL

A simple way to look at the most commonly used workloads graphically:

Note that both Elastic and Prometheus are also among the first stateful workloads that Kubernetes operators run. Even Kubernetes deployments that are otherwise not used for stateful workloads often run Elastic and Prometheus as part of the care and feeding of the Kubernetes platform itself.

Arista Networks

As one of the early adopters, Arista’s use of OpenEBS is particularly interesting because, over time, they have started to run multiple stateful applications on it, including Gerrit (multiple flavors), NPM, Maven, Redis, NFS, Sonarqube, and a number of internal tools.

Arista has been using OpenEBS across multiple cluster instances, including Dev, Staging, and Prod. Approximately 90% of their usage has been on the ZFS-based OpenEBS cStor, and 10% on the older Jiva storage engine.

What Worked Well for Arista

- Seamless support for Kubernetes rolling upgrades, particularly maintaining a master-node majority without requiring all replicas/nodes to be online.

- Automatic redeployment of degraded replicas without operator involvement

- Dispersed volumes across multiple nodes of a cluster

- Allowing developers to focus on core development rather than worrying about storage architecture

- OpenEBS requires fewer nodes to operate than other popular storage solutions.

- OpenEBS allows extensive custom configuration of storage nodes through NDM and cStor Pool – enabling control over the underlying nodes.

- Single-command deployment through `helm install`

- OpenEBS decouples application nodes from storage nodes for isolated maintenance, optimization, and efficient disaster recovery.

- OpenEBS uses the iSCSI storage networking protocol to decouple storage from applications.

CodeWave

CodeWave delivers scalable custom web solutions and operates cloud-based infrastructure for its clients across the globe. Most of its application workloads run on bare-metal clusters, with some nodes on Kernel-based Virtual Machines (KVM).

CodeWave uses the OpenEBS Jiva storage engine in its staging and production instances for a number of stateful applications, including:

- Bitwarden,

- Bookstack,

- Allegro's Ralph,

- LimeSurvey,

- Grafana,

- Hackmd/Codimd,

- MinIO,

- Nextcloud,

- Percona XtraDB Cluster Operator,

- SonarQube,

- Sentry, and

- JupyterHub.

What Worked Well for CodeWave

CodeWave found ease of use and stability on clusters to be the most prominent advantages that gave OpenEBS the edge over other storage solutions. CodeWave also confirmed that it uses OpenEBS extensively as its default Storage Class across multiple layers, including persistent databases, live file storage, and backups.

Cloud Native Computing Foundation (CNCF)

CNCF is one of the largest non-profit projects operated by the Linux Foundation, and its objective is to build and drive adoption of emerging cloud-native frameworks and tools. As one of the flag-bearers of cloud-native advocacy, CNCF focuses on the growth and adoption of an ecosystem of open-source, vendor-neutral projects. Over the years, CNCF has hosted several open-source projects, including Kubernetes, Prometheus, and Envoy Proxy, to nurture them and aid wider adoption.

Apart from supporting OpenEBS as one of its Sandbox projects, CNCF and the Linux Foundation are also users of OpenEBS on both Test and Production instances for a number of stateful applications, including:

CNCF:

- PostgreSQL (including automatic backups)

- NFS server (for allowing multiple r/w access)

- Nginx (serving home pages, backups, CSV reports, etc.)

- Storage for Git repositories clones

- All DevStats applications (homegrown)

The Linux Foundation:

- MariaDB database (Helm master + slave replication, automatic backups)

- ElasticSearch cluster (Helm chart master + 4 slave nodes)

- Postgres (including automatic backups)

- Redis

- Grimoire stack (CHAOSS)

- Storage for Git repositories clones

- Homegrown tools (SDS – sync data sources, Golang orchestrator to fetch Grimoire data)

What Worked Well for CNCF

CNCF confirmed that it runs all of its stateful applications on bare-metal servers and uses the OpenEBS Local PV storage engine for stateful database storage because of its speed. CNCF also runs NFS servers, backed by Local Persistent Volumes, to give multiple clients read-write access. Overall, CNCF confirmed that it uses OpenEBS as the storage solution for everything it installs on Kubernetes.

The list of CNCF/Linux Foundation sites currently using OpenEBS for storage:

- https://devstats.cncf.io (and all subprojects) – Prod Instance

- https://teststats.cncf.io (and all subprojects) – Test Instance

- https://devstats.coreinfrastructure.org

- https://devstats.graphql.org

- https://devstats.cd.foundation

- https://devstats.cncf.io/backups (Backups)

- DevStats REST API: https://devstats.cncf.io/api/v1

ByteDance

ByteDance is an internet company that operates a range of technology-enabled content platforms across the globe. Through a number of popular platforms, such as TikTok, ByteDance enables people to connect and create content using emerging technologies.

ByteDance is one of OpenEBS’ enterprise adopters. It has been using OpenEBS for a stateful Elasticsearch workload and is now evaluating it for broader usage.

What Worked Well for ByteDance

ByteDance uses the OpenEBS Local PV storage engine to create persistent volumes out of local disks or host paths on worker nodes. This gives ByteDance performance equivalent to that of the local disk or file system (host path) on which the volumes are created. ByteDance has also taken advantage of OpenEBS’ Dynamic Provisioning feature and reports that it has saved a lot of time in setting up and maintaining storage disks within an Elasticsearch cluster.
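To make dynamic provisioning with Local PV more concrete, here is a minimal sketch of what the Kubernetes objects might look like. It is an illustration only and not ByteDance's actual configuration. The object names (`local-hostpath`, `es-data`), the base path, and the volume size are hypothetical. The provisioner name and annotation keys follow the OpenEBS Local PV hostpath documentation as we understand it, so please verify them against the OpenEBS version you have installed. The script builds a StorageClass and a PersistentVolumeClaim and prints them as JSON.

```python
import json


def local_pv_storage_class(name, base_path):
    """Build a StorageClass manifest for OpenEBS Local PV (hostpath).

    The annotation keys and provisioner name are assumptions taken from
    the OpenEBS Local PV docs; check them against your installed version.
    """
    # The Local PV configuration is carried as a YAML string in an annotation.
    config = (
        "- name: StorageType\n"
        "  value: hostpath\n"
        "- name: BasePath\n"
        f"  value: {base_path}\n"
    )
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {
            "name": name,
            "annotations": {
                "openebs.io/cas-type": "local",
                "cas.openebs.io/config": config,
            },
        },
        "provisioner": "openebs.io/local",
        "reclaimPolicy": "Delete",
        # Delay binding until a pod is scheduled, so the volume is
        # created on the node where the pod actually runs.
        "volumeBindingMode": "WaitForFirstConsumer",
    }


def volume_claim(name, storage_class, size):
    """Build a PersistentVolumeClaim that requests a dynamically provisioned volume."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "storageClassName": storage_class,
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": size}},
        },
    }


if __name__ == "__main__":
    # Hypothetical names, path, and size, chosen only for illustration.
    sc = local_pv_storage_class("local-hostpath", "/var/local-hostpath")
    pvc = volume_claim("es-data", sc["metadata"]["name"], "5Gi")
    manifest = {"apiVersion": "v1", "kind": "List", "items": [sc, pvc]}
    print(json.dumps(manifest, indent=2))
```

Because `kubectl` accepts JSON as well as YAML, the printed output could be saved to a file and applied with `kubectl apply -f`. Once a class like this exists, each new claim gets its volume created on demand, which is the time saving dynamic provisioning is meant to deliver.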

A quick snapshot of some of OpenEBS’ enterprise adopters and their workloads:

| Enterprise | Stateful Workload | ||||

| Elasticsearch | Prometheus | PostgreSQL | Redis | MySQL | |

| Arista Networks | Yes | ||||

| ByteDance | Yes | ||||

| CNCF, The Linux Foundation | Yes | Yes | Yes | ||

| KubeSphere | Yes | Yes | Yes | Yes | |

| Optoro | Yes | Yes | Yes | Yes | Yes |

| Orange | Yes | Yes | Yes | Yes |

Closing Thoughts

With this article, we wanted not only to highlight the use-cases shared by OpenEBS adopters but also to express our gratitude to its vibrant community for its consistent feedback and support. That feedback has helped us develop a solution that addresses teams’ real need to run stateful workloads with more agility, resilience, cost savings, and freedom from cloud and storage lock-in.

OpenEBS continues to be the default storage solution for a vast number of workloads, particularly open-source databases on Kubernetes. Given these successful use-cases, we’re confident that the adoption of OpenEBS will keep growing for years to come.

Resources:

To read Adopter use-cases or contribute your own, visit: https://github.com/openebs/openebs/blob/master/ADOPTERS.md.

Many of our users are active on the Kubernetes #OpenEBS channel. Feel free to join us, ask questions, and share your experience: https://openebs.io/community

For those who are interested in Rust and the performance of data on Kubernetes, there is also a Discord server dedicated to OpenEBS Mayastor: https://discord.gg/zsFfszM8J2