KubeCon + CloudNativeCon sponsor guest post from Alaa Youssef, manager of the Container Cloud Platform at IBM Research

AI Workloads on The Cloud

The use of Kubernetes-orchestrated container clouds to run AI (Artificial Intelligence) and ML (Machine Learning) workloads has led many to question whether Kubernetes’ resource management and scheduling can meet all the requirements these workloads impose.

To begin with, some of the typical machine learning and deep learning frameworks, such as Spark, Flink, Kubeflow, and FfDL, require multiple learners or executors to be scheduled and started concurrently before the application can run. Co-locating these workers on the same node, or placing them on topologically close nodes, is also desirable to minimize communication delays when moving large data sets. More recent trends show a tendency towards massively parallel and elastic jobs, where a large number of short-running tasks (lasting minutes, seconds, or even less) are spawned to consume the available resources. The more resources that can be dedicated to starting parallel tasks, the faster the job finishes. In the end, the total amount of resources consumed is more or less the same; it is the overall job response time that suffers when the allocated resources are constrained. In an offline processing mode, response time is not a critical factor for the end user.
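The trade-off between allocated resources and response time can be made concrete with a back-of-the-envelope sketch. It assumes a hypothetical job made of equally sized, independent tasks that each occupy one resource slot; the function names and numbers are illustrative and do not come from any particular framework.

```python
import math

def job_response_time(num_tasks: int, task_seconds: float, slots: int) -> float:
    """Wall-clock time when tasks run in waves of at most `slots` at a time."""
    waves = math.ceil(num_tasks / slots)
    return waves * task_seconds

def consumed_slot_seconds(num_tasks: int, task_seconds: float) -> float:
    """Total consumption; it does not depend on how many slots are allocated."""
    return num_tasks * task_seconds

# Assumed workload: 1000 tasks of 30 seconds each.
for slots in (10, 100, 1000):
    print(slots,
          job_response_time(1000, 30.0, slots),
          consumed_slot_seconds(1000, 30.0))
```

Under these assumptions the consumed slot-seconds stay constant, while the response time shrinks as more slots are made available.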

New use cases have also emerged in which users increasingly use these frameworks to gain insights interactively, through online data exploration and online processing. For example, using a Jupyter notebook, a data scientist may issue commands to start an ML job that analyzes a big data set, then wait for the results before starting a subsequent processing job, all within an interactive session. Here, unlike in offline processing, response time directly affects the user.

Managing Jobs vs Tasks

When large numbers of AI/ML jobs are submitted on a daily or even hourly basis, the demand for resources becomes highly variable. Some of these jobs may arrive almost simultaneously, or create a backlog of work, and require an arbitration policy that decides which job goes first given the cloud resources currently available. Job priorities, classes of service, and user quotas enable the formulation of policies that are meaningful from the users’ perspective and that can be linked to the charging model. For scarce resources, such as GPUs, the ability to buffer and prioritize jobs, as well as to enforce quotas, becomes even more crucial. Note that whole jobs, as opposed to individual tasks, are the subject of queuing and control. Focusing only on individual tasks makes little sense, because partially executing tasks from multiple jobs may lead to partial deadlocks, in which many jobs are simultaneously active while none is able to proceed to completion.
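The partial deadlock described above can be illustrated with a toy simulation. It assumes hypothetical jobs that can only run once all of their tasks hold a slot at the same time; the function names, job names, and capacities are made up for illustration.

```python
def admit_task_by_task(capacity, jobs):
    """Hand out slots one task at a time, round-robin across jobs."""
    held = {name: 0 for name in jobs}
    free = capacity
    progress = True
    while free > 0 and progress:
        progress = False
        for name, needed in jobs.items():
            if free > 0 and held[name] < needed:
                held[name] += 1
                free -= 1
                progress = True
    return held

def admit_whole_jobs(capacity, jobs):
    """Admit a job only if all of its tasks fit; otherwise it stays queued."""
    held = {name: 0 for name in jobs}
    free = capacity
    for name, needed in jobs.items():
        if needed <= free:
            held[name] = needed
            free -= needed
    return held

def runnable(held, jobs):
    """Jobs that hold every slot they need and can therefore make progress."""
    return [name for name, needed in jobs.items() if held[name] == needed]

jobs = {"job-a": 3, "job-b": 3}   # each job needs 3 slots concurrently
capacity = 4                      # the cluster only has 4 slots

print(runnable(admit_task_by_task(capacity, jobs), jobs))  # no job can run
print(runnable(admit_whole_jobs(capacity, jobs), jobs))    # job-a runs, job-b waits
```

With task-level admission, both jobs end up holding two slots each and neither can start. With job-level admission, one job runs to completion while the other waits in the queue.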

It’s Only a Cloud!

We often hear the phrase “the sky is the limit” used to refer to an unlimited amount of something. Cloud enthusiasts like to think of cloud resources as infinite. They often praise the elasticity of the cloud and its ability to absorb any resource demand whatsoever. While this is an ideal model to aspire to, one must balance it with the reality that cloud operators face: they have to run profitable businesses that offer reasonable pricing for their resources and services. These prices cannot be reasonable if the cloud provider has to own seemingly infinite resources. In fact, minimizing providers’ costs starts with operating their physical resources (hardware) at higher utilization points. Luckily, the saying does not state that the cloud is the limit, but rather that the sky is.

At the cluster level, cluster owners may request to expand or shrink their cluster resources, and all providers offer the ability to do so. However, response times vary. Typically, a scaling request takes on the order of minutes, depending on how many additional worker nodes are being added. In the case of bare-metal machines with a special hardware configuration, such as a particular GPU type, it may take longer to fulfill a cluster scale-up request. While cluster scaling is a useful mechanism for capacity planning over relatively longer time windows, cluster owners cannot rely on it to respond to the instantaneous demand fluctuations induced by resource-hungry AI and ML workloads.

The Evolving Multi-cloud and Multi-cluster Patterns

The typical enterprise today uses services from multiple cloud providers, and an organization owning tens of Kubernetes clusters is not uncommon. Smaller clusters are easier to manage, and in the case of failure the blast radius of impacted applications and users is limited. A sharded multi-cluster architecture therefore appeals to both service providers and operations teams. This creates an additional management decision when running a given AI workload: on which cluster it should be placed. A static assignment of users or application sets to clusters is too naïve to utilize the available multi-cluster resources efficiently.

The Rise of Hybrid Cloud and Edge Computing

A scenario that is often cited as an advantage of cloud computing is the ability to burst from a private cloud or private IT infrastructure to a public cloud at times of unexpected or seasonal high demand. With traditional retail applications, this seasonality may be well studied and anticipated. With AI and ML workloads, on the other hand, the need to offload local resources or burst to a public cloud may arise more frequently and at any time, because a resource-hungry, massively parallel job may be started at any moment. This requires the ability to burst from private to public cloud instantaneously, in a dynamically managed and seamless way.

Advancements in the field of IoT (Internet of Things) and the surge in the use of devices and sensors at the edge of the network have led to the emergence of Edge Computing as a new paradigm. In this paradigm, compute power is brought close to the sources of data, so that initial data analysis and learning steps can take place near where the data is generated, avoiding the overhead of transferring enormous data streams all the way back to a centralized cloud computing location. In some situations, such data streaming may not even be feasible because fast and reliable network connectivity is unavailable, hence the need for Kubernetes clusters at the edge of the network.

The Kubernetes Scheduler Can’t Do Everything

Now that we have covered the landscape of issues, as well as evolving runtime patterns, associated with executing AI workloads in the cloud, we can look at what the Kubernetes scheduler is good at addressing from among these issues and what it is not suited for.

The scheduler is responsible for the placement of PODs (the schedulable unit in Kubernetes; you can think of it as a container potentially together with supporting side-car containers) within a Kubernetes cluster. It is part and parcel of the Kubernetes architecture and it is good for placing PODs on the right worker nodes, within a cluster, while maintaining constraints such as available capacity, resource requests and limits, and affinity to certain nodes. A POD may represent a task in a job, or an executor in which multiple tasks may be executed by the AI/ML framework. Discussing these different execution models for frameworks and the pros and cons of each is beyond the scope of this article.

What the Kubernetes scheduler cannot do is manage jobs holistically. It is obvious in the multi-cluster and hybrid scenarios that a scheduler sitting within a single cluster cannot have the global view needed to dispatch jobs to multiple clusters. But even in single-cluster environments, it lacks the ability to manage jobs. Instead, jobs must be broken down into their constituent PODs as soon as they are received by the Kubernetes API server, and those PODs are submitted to the scheduler. In times of high demand, this may cause an excessive number of pending PODs, which may overwhelm the scheduler, other controllers, and the underlying etcd persistence layer, and significantly slow down overall cluster performance.

What is The Solution?

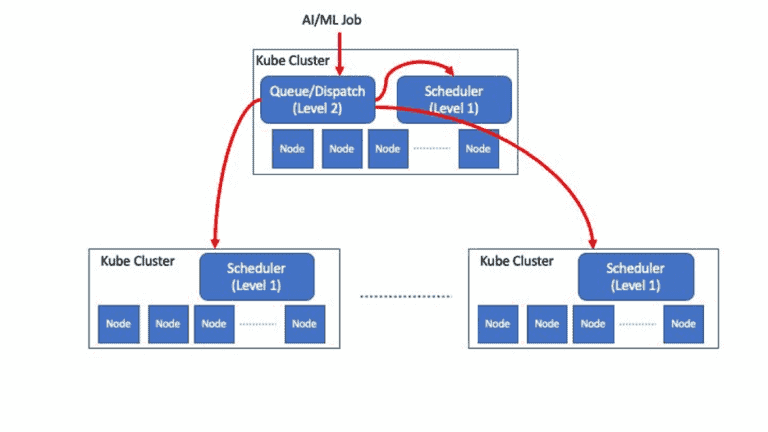

All the above indicates that something is missing in the overall approach to resource management of AI/ML workloads in Kubernetes based environments and suggests that the best way to manage such workloads is to follow a two-level resource management approach that is Kube-native.

Kube-native two-level resource management for AI Workloads.

First, what is Kube-native?

It means that the solution is built as a Kubernetes extension, using the extensibility frameworks that Kubernetes offers. Any new resource management component should be realized as a Kubernetes operator (controller). In addition, the API and interaction mechanisms exposed to end users and admins should be a seamless extension of the Kubernetes API. Users and admins should be able to use the standard kubectl CLI, for example, to manage the life cycle of AI jobs.

Second, what are the two levels of resource management?

The first level is the existing scheduler level. As explained above, each Kubernetes cluster needs a scheduler that places PODs, or sometimes groups of PODs together, on the right nodes, while maintaining constraints such as co-location, affinity, and co-scheduling (gang scheduling). The vanilla Kube scheduler does not do all of that, but you can replace it with a scheduler that performs all or some of these functions. Several open source Kube schedulers are available, such as Volcano, YuniKorn, and Safe Scheduler. Each of these schedulers addresses some of these requirements.
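As a rough illustration of what co-scheduling (gang scheduling) means at this level, the sketch below places a group of PODs all-or-nothing using a simple first-fit rule. It is a toy model under stated assumptions, not how Volcano, YuniKorn, or any other scheduler implements it; the function name, node names, and GPU counts are hypothetical.

```python
from typing import Dict, List, Optional

def gang_place(pod_gpu_requests: List[int],
               free_gpus: Dict[str, int]) -> Optional[Dict[int, str]]:
    """Place every pod of a group or none of them (first-fit, largest pod first)."""
    remaining = dict(free_gpus)  # work on a copy so a failed attempt reserves nothing
    placement: Dict[int, str] = {}
    order = sorted(range(len(pod_gpu_requests)),
                   key=lambda i: -pod_gpu_requests[i])
    for i in order:
        need = pod_gpu_requests[i]
        node = next((n for n, free in remaining.items() if free >= need), None)
        if node is None:
            return None  # one pod does not fit, so the whole group is rejected
        remaining[node] -= need
        placement[i] = node
    return placement

nodes = {"node-1": 4, "node-2": 1}   # assumed free GPUs per node
print(gang_place([2, 2, 1], nodes))  # all three pods fit, so the group is placed
print(gang_place([2, 2, 2], nodes))  # the third pod cannot fit, so nothing is placed
```

The point of the all-or-nothing rule is that a group that cannot start as a whole does not hold resources that another group could use.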

The second level, the higher-level resource manager, is responsible for queuing and dispatching the AI/ML jobs and for enforcing user quotas. Every job submitted to the system is received and queued for execution by the second-level resource manager. It is responsible for deciding when to release the job to be served and where that job should be served, i.e., on which cluster. It implements policies that regulate the flow of jobs to the clusters, allocate resources to jobs at a coarse level of granularity, and realize the SLA differentiation imposed by priorities or classes of service. The important thing to note is that it operates at the job level, not the task or POD level. Hence the lower-level (first-level) schedulers running in each cluster are not overwhelmed with pending PODs that should not be executed yet. Also, once a decision is made to execute a job, its corresponding resources, including its PODs, are created only on the selected target cluster, and only at that moment.
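The responsibilities of the second-level resource manager can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation of any existing project: the `Job` and `Dispatcher` classes, the single GPU dimension, the per-user quota, and the "most free capacity" cluster choice are all hypothetical simplifications.

```python
import heapq
import itertools
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

@dataclass
class Job:
    name: str
    user: str
    priority: int   # higher priority is served first
    gpus: int       # total GPUs the whole job needs

class Dispatcher:
    """Queues whole jobs and releases each one to a single cluster when it fits."""

    def __init__(self, cluster_free_gpus: Dict[str, int],
                 user_quota_gpus: Dict[str, int]):
        self.free = dict(cluster_free_gpus)
        self.quota = dict(user_quota_gpus)
        self.used: Dict[str, int] = {}
        self._queue: List[Tuple[int, int, Job]] = []
        self._seq = itertools.count()  # tie-breaker keeps FIFO order per priority

    def submit(self, job: Job) -> None:
        heapq.heappush(self._queue, (-job.priority, next(self._seq), job))

    def _pick_cluster(self, job: Job) -> Optional[str]:
        fits = [c for c, free in self.free.items() if free >= job.gpus]
        return max(fits, key=lambda c: self.free[c]) if fits else None

    def dispatch(self) -> List[Tuple[str, str]]:
        """Release every queued job that is within quota and fits on a cluster."""
        released, waiting = [], []
        while self._queue:
            entry = heapq.heappop(self._queue)
            job = entry[2]
            in_quota = (self.used.get(job.user, 0) + job.gpus
                        <= self.quota.get(job.user, 0))
            cluster = self._pick_cluster(job) if in_quota else None
            if cluster is None:
                waiting.append(entry)  # stays queued; no pods are created for it
                continue
            self.free[cluster] -= job.gpus
            self.used[job.user] = self.used.get(job.user, 0) + job.gpus
            released.append((job.name, cluster))
        for entry in waiting:
            heapq.heappush(self._queue, entry)
        return released

d = Dispatcher({"cluster-a": 8, "cluster-b": 4}, {"alice": 8, "bob": 4})
d.submit(Job("train-small", "bob", priority=1, gpus=4))
d.submit(Job("train-big", "alice", priority=5, gpus=8))
d.submit(Job("train-extra", "alice", priority=3, gpus=2))
print(d.dispatch())
```

In this sketch, a job that is over quota or does not fit anywhere simply stays in the queue, and lower-priority jobs behind it may still be released. Whether to allow that kind of backfilling is a policy decision; a stricter policy could stop at the first job that cannot be dispatched.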

To do its job properly and make these decisions, the second-level resource manager needs to tap into the resource monitoring streams of the target clusters. Coupled with the right policy configurations, it is then capable of queuing and dispatching jobs properly. Depending on implementation details, the second-level manager may require an “agent” on each target cluster to help it accomplish its goals. In that case, the agent receives the dispatched job and creates its corresponding PODs locally, instead of the dispatcher creating them remotely.

In contrast to the first-level scheduler, for which several open source and proprietary solutions exist, including the Kube default scheduler, the second-level resource manager has not received much attention from the community. One open source project, Multi-Cluster App Dispatcher, addresses this important aspect of the solution. Hopefully, we can shed more light on that in future blog posts.

Alaa Youssef manages the Container Cloud Platform team at IBM T.J. Watson Research Center. His research focus is on cloud computing, secure distributed systems, and continuous delivery of large software systems. He has authored and co-authored many technical publications, and holds over a dozen issued patents. Dr. Youssef is a senior architect who has held multiple technical and management positions in IBM Research, and in Services in multiple geographies. He received his PhD in Computer Science from Old Dominion University, Virginia, and his MSc in Computer