Guest post by Rob Richardson (@rob_rich), Technical Evangelist, MemSQL & Kavya Pearlman (@KavyaPearlman), Global Cybersecurity Strategist, Wallarm

We are witnessing the rise of microservices and cloud-native technologies. However, one big challenge of microservice architecture is the overhead of managing network communication between services. Many companies successfully use tools like Kubernetes for deployment, but they still face runtime challenges with routing, monitoring, and security. Managing a tangle of tens, hundreds, or even thousands of services communicating in production is a job only for the bravest technical hearts. This is where a Service Mesh comes in to clean up the mess.

Monolithic to messy microservices

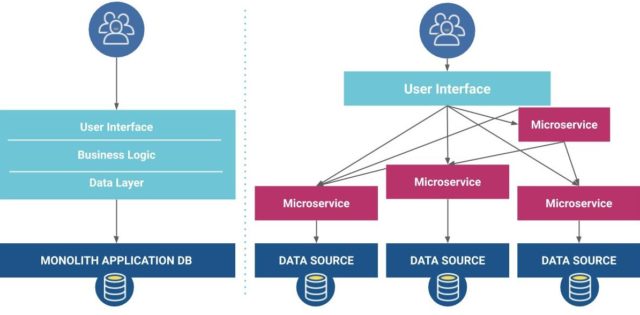

Historically, deployments were hard. To avoid this pain, we packed all the pieces of the software into one large deployment bundle, a monolith, and deployed it infrequently. This single package reduced the deployment burden by minimizing how often we deployed and how many artifacts we had to ship. But this huge package was complex: it contained every piece of functionality and all of their interdependencies. The monolith was fragile, and given the pain of deployment, we had to tend the production environment very carefully.

To combat this problem, we moved to microservices: small, simple pieces of functionality that can be deployed independently and frequently. A microservices architecture involves building small modules that each address a specific task or business objective. They are generally simple to build and replace, and they typically communicate with other services via web requests or event queues. These small, modular components assemble into larger systems.

Microservice architecture presents a new challenge: internal components now have web addresses. What used to be an internal method call, protected by the process boundary, is now a network request. This creates the messy network architecture shown in the diagram above. What keeps outside traffic from calling internal components directly? The solution to this mess: a Service Mesh.

What is a Service Mesh?

A Service Mesh answers the question, “How do I observe, control, or secure communication between services?” A Service Mesh intercepts traffic going into and out of each container, whether that traffic flows between containers or to and from outside resources. Because it intercepts all cluster network traffic, it can monitor and validate connections and map out how services communicate. It can also track service health, intercept failures, or deliberately inject faults (chaos testing).

Be careful not to confuse a Service Mesh with a “mesh of services.” A mesh of services is the set of services needed to accomplish the software’s tasks. A Service Mesh is the management layer that monitors and controls a collection of microservices. A Service Mesh augments, but does not replace, the services it controls. As Zack Butcher says, “If it doesn’t have a control plane, it ain’t a Service Mesh.”

Another similar term in this space is an API gateway. A Service Mesh typically wraps each container in a network proxy by adding a side-car container to each microservice. By comparison, an API gateway is a simpler construct: a single component sits at the edge of the cluster and validates inbound traffic, but it doesn’t monitor traffic between containers.

How does it work?

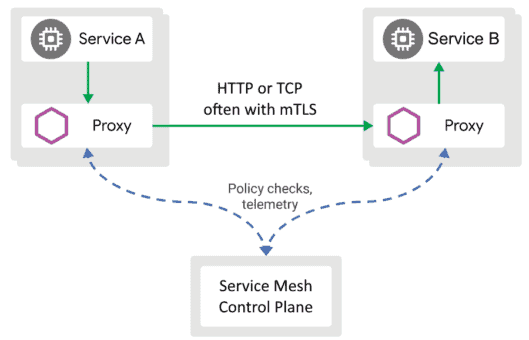

Istio is an open-source Service Mesh. Let’s use it as an example to see how a typical Service Mesh works. At the top of the diagram, we see Service A and Service B. The grey box is the pod boundary, and each pod contains two containers: the service and a side-car proxy. Istio uses Envoy, an open-source edge and service proxy, as its side-car. At the bottom of the diagram is the Istio control plane. Linkerd is another open-source Service Mesh with a nearly identical network architecture. Linkerd uses a different side-car proxy and its control plane is made of different components, but the methodology is the same.

Without a Service Mesh, Service A would call Service B directly. With a Service Mesh, Service A sends its request to its local proxy, an Envoy proxy in this case. The proxy consults the Istio control plane, which validates that A is allowed to talk to B and supplies the details needed to reach B’s proxy. If the mesh is configured for secure communication, A’s proxy connects to B’s proxy over a mutual TLS (mTLS) connection, in which each side proves its identity with a certificate. B’s proxy validates the connection against the control plane’s policy, then forwards the request to Service B. In practice, Istio’s control plane pushes configuration, policy, and certificates to the proxies ahead of time, so each proxy can enforce these checks locally rather than calling the control plane on every request. In this scenario, every network connection uses TLS, with one exception: traffic between a service and its own proxy may not be encrypted (depending on the service). This is acceptable because that traffic never leaves the pod’s network boundary. Any traffic moving between pods is now encrypted.
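The hop-by-hop flow above can be modeled in a few lines of Python. This is a minimal, in-memory sketch, not Istio or Envoy code: `ControlPlane`, `SidecarProxy`, the service names, and the allow-list are hypothetical, and mTLS is represented only by a flag on the hop rather than by real encryption. It shows the key property of the design: the application code never checks policy itself; both proxies do.

```python
# Hypothetical model of the side-car request flow; no real networking or TLS.
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set, Tuple


@dataclass
class ControlPlane:
    """Hypothetical control plane: owns the allow-list and knows every proxy."""
    allowed: Set[Tuple[str, str]]  # (caller, callee) pairs permitted to talk
    proxies: Dict[str, "SidecarProxy"] = field(default_factory=dict)

    def register(self, proxy: "SidecarProxy") -> None:
        self.proxies[proxy.service] = proxy

    def is_allowed(self, caller: str, callee: str) -> bool:
        return (caller, callee) in self.allowed


@dataclass
class SidecarProxy:
    """Hypothetical side-car that sits next to one service inside its pod."""
    service: str
    app: Callable[[str], str]  # the co-located application container
    control_plane: ControlPlane = field(repr=False, compare=False)
    log: List[str] = field(default_factory=list)

    def outbound(self, callee: str, payload: str) -> str:
        # Step 1: the app handed us the request over the pod-local network.
        if not self.control_plane.is_allowed(self.service, callee):
            self.log.append(f"DENY {self.service}->{callee}")
            raise PermissionError(f"{self.service} may not call {callee}")
        self.log.append(f"ALLOW {self.service}->{callee}")
        peer = self.control_plane.proxies[callee]
        # Step 2: proxy-to-proxy hop. mTLS is only modeled as a flag here.
        return peer.inbound(self.service, payload, mtls=True)

    def inbound(self, caller: str, payload: str, mtls: bool) -> str:
        # Step 3: the receiving proxy re-validates before forwarding to its app.
        if not mtls or not self.control_plane.is_allowed(caller, self.service):
            self.log.append(f"REJECT {caller}->{self.service}")
            raise PermissionError(f"rejected {caller}->{self.service}")
        self.log.append(f"FORWARD {caller}->{self.service}")
        return self.app(payload)


if __name__ == "__main__":
    cp = ControlPlane(allowed={("service-a", "service-b")})
    a = SidecarProxy("service-a", lambda p: "A handled " + p, cp)
    b = SidecarProxy("service-b", lambda p: "B handled " + p, cp)
    cp.register(a)
    cp.register(b)
    print(a.outbound("service-b", "order #1"))  # B handled order #1
```

Calling `b.outbound("service-a", ...)` in this model raises `PermissionError`, because the allow-list only permits traffic from A to B.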

When to choose a Service Mesh

The beauty of intercepting all cluster traffic is that a Service Mesh can do really interesting things to validate and route traffic. In general, we choose a Service Mesh when we’re looking to solve one of these problems:

- Observe traffic in the cluster: discover, map, log

- Control traffic in the cluster: access policies, split traffic between versions

- Secure traffic between network resources: encrypted (mTLS) traffic between containers

The most common reason to opt for a Service Mesh is to secure communication between containers. The Service Mesh adds a side-car proxy container to each pod, which makes it easy to encrypt traffic between the side-car proxies so prying eyes can’t see cluster network traffic. This is a great feature when reaching for security-in-depth.
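What makes mesh TLS “mutual” is that both sides present and verify certificates. The sketch below uses Python’s standard `ssl` module to build the two contexts a pair of proxies would need. It assumes you supply the certificate, key, and CA file paths; in a real mesh the control plane issues and rotates these certificates automatically, and peers are verified against the mesh’s own CA rather than public ones.

```python
# Sketch of the two TLS contexts behind one mTLS hop (paths are assumptions).
import ssl
from typing import Optional


def mtls_server_context(cert: Optional[str] = None, key: Optional[str] = None,
                        ca: Optional[str] = None) -> ssl.SSLContext:
    """Receiving proxy: present our certificate AND require one from the caller."""
    # When `ca` is given, only that CA is trusted; otherwise system defaults load.
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH, cafile=ca)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED  # this is what makes the TLS "mutual"
    if cert and key:
        ctx.load_cert_chain(certfile=cert, keyfile=key)
    return ctx


def mtls_client_context(cert: Optional[str] = None, key: Optional[str] = None,
                        ca: Optional[str] = None) -> ssl.SSLContext:
    """Calling proxy: verify the server against the CA and present our own cert."""
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert and key:
        ctx.load_cert_chain(certfile=cert, keyfile=key)  # our client identity
    return ctx
```

A calling proxy would wrap its socket with `client_ctx.wrap_socket(sock, server_hostname=...)` and the receiving proxy with `server_ctx.wrap_socket(sock, server_side=True)`; the handshake fails if either side lacks a certificate the other trusts.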

Another common reason to use a Service Mesh is to ensure only authorized resources can call each service. Because all traffic goes through the side-car proxy, we can validate traffic against approved network policies. For example, we can allow only the executive portal container to call the corporate financial reporting container.
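A default-deny allow-list is the core of this kind of policy. In Istio it is expressed as an AuthorizationPolicy resource, where caller identities (principals) come from the mTLS certificates and look like `cluster.local/ns/<namespace>/sa/<service-account>`. The Python below is only a hypothetical model of the evaluation logic for the executive-portal example; the rule structure, namespaces, and service-account names are assumptions.

```python
# Hypothetical model of default-deny, identity-based authorization.
from dataclasses import dataclass
from typing import FrozenSet, Sequence, Tuple


@dataclass(frozen=True)
class Rule:
    """One allow rule: which caller identities may use which methods on a service."""
    callee: str
    principals: FrozenSet[str]  # caller identities, e.g. taken from mTLS certificates
    methods: FrozenSet[str]     # HTTP methods the rule permits


# Hypothetical policy for the example in the text.
RULES: Tuple[Rule, ...] = (
    Rule(
        callee="financial-reporting",
        principals=frozenset({"cluster.local/ns/exec/sa/executive-portal"}),
        methods=frozenset({"GET"}),
    ),
)


def authorize(principal: str, callee: str, method: str,
              rules: Sequence[Rule] = RULES) -> bool:
    """Default deny: allow only when some rule names this callee, caller, and method."""
    return any(
        r.callee == callee and principal in r.principals and method in r.methods
        for r in rules
    )
```

Anything not explicitly listed, such as a new dashboard calling the reporting service or the portal trying a `DELETE`, is refused.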

Because the Service Mesh stands between all microservice communication, it’s trivial to log these interactions. From this data, we can view a dashboard of service health and infer a network diagram of how services communicate. This diagram shows not what the developer intended but what is actually happening. For example, we might discover that the checkout process isn’t calling the tax calculation service at all but is instead using a hard-coded debug value the developer forgot to remove. Or we might discover that a new shadow IT dashboard has been installed and is unexpectedly calling the shipping service. Perhaps we need to adjust access policies or increase service capacity to compensate.
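Inferring the real network diagram amounts to counting caller-to-callee edges in the mesh’s access logs and diffing them against the intended design. This minimal sketch assumes the log has already been reduced to `(caller, callee)` pairs; the service names mirror the examples above and are hypothetical.

```python
# Sketch: build the observed service graph and diff it against the design.
from collections import Counter
from typing import Dict, Iterable, Set, Tuple

Edge = Tuple[str, str]  # (caller, callee)


def observed_edges(call_log: Iterable[Edge]) -> Counter:
    """Count how often each caller -> callee edge appears in the access log."""
    return Counter(call_log)


def compare_to_design(observed: Counter, designed: Set[Edge]) -> Dict[str, Set[Edge]]:
    seen = set(observed)
    return {
        "unexpected": seen - designed,  # calls nobody planned for
        "missing": designed - seen,     # planned calls that never happen
    }


if __name__ == "__main__":
    designed = {("checkout", "tax"), ("checkout", "shipping")}
    log = [("checkout", "shipping"), ("checkout", "shipping"),
           ("shadow-dashboard", "shipping")]
    report = compare_to_design(observed_edges(log), designed)
    print(report["unexpected"])  # {('shadow-dashboard', 'shipping')}
    print(report["missing"])     # {('checkout', 'tax')}
```

The "missing" edge is the forgotten tax call, and the "unexpected" edge is the shadow IT dashboard.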

Another great reason to use a Service Mesh is to split traffic between different versions of the same software. We may run an A/B test to experiment with new features and measure customer engagement and financial impact. Or we may have a beta channel where eager customers can try out new features before they’re released. Or we can use canary deployments, sending only a percentage of the traffic to the new version; as we gain confidence, we increase that percentage until the old version is unused. In test scenarios, the Service Mesh can inject faults into the traffic so we can test service resiliency. In production, the Service Mesh can act as a circuit breaker, stopping calls to a failing service so it can recover more easily.
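The splitting and circuit-breaking behaviors can be sketched as plain functions. In Istio they are configured declaratively (weighted routes in a VirtualService, outlier detection in a DestinationRule), so treat this Python as a model of the logic, not of any mesh’s implementation. `pick_version` hashes the user ID so each user consistently lands on the same version, which suits A/B tests and beta channels; a mesh’s weighted route typically splits individual requests instead. The circuit breaker’s thresholds are arbitrary assumptions.

```python
# Sketch of weighted version selection and a simple circuit breaker.
import hashlib
import time
from typing import Callable, Dict, Optional


def pick_version(user_id: str, weights: Dict[str, int]) -> str:
    """Sticky weighted split: hash the user into a bucket 0..99, walk cumulative weights."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must add up to 100")
    bucket = int(hashlib.sha256(user_id.encode("utf-8")).hexdigest(), 16) % 100
    cumulative = 0
    for version, weight in weights.items():
        cumulative += weight
        if bucket < cumulative:
            return version
    raise AssertionError("unreachable: weights sum to 100")


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; after `reset_after` seconds it
    lets trial requests through (half-open) and closes again on the first success."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True  # closed: traffic flows normally
        # Open: refuse calls until the cool-down has elapsed, then go half-open.
        return self.clock() - self.opened_at >= self.reset_after

    def record(self, success: bool) -> None:
        if success:
            self.failures = 0
            self.opened_at = None
        else:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # (re)open and restart the cool-down
```

A canary rollout then becomes a sequence of weight changes, for example `{"v1": 90, "v2": 10}` followed by `{"v1": 50, "v2": 50}`, ending at `{"v1": 0, "v2": 100}`.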

When to not choose a Service Mesh

It’s easy to reach for a Service Mesh too eagerly without understanding its impact on the cluster. Perhaps the software requirements include “secure communication between containers.” Without a business need behind that requirement, adding a Service Mesh can make things even messier.

Compare a cluster with a Service Mesh to one without. In a regular cluster, we have N containers doing work. Add a Service Mesh, and we have the same N containers plus N side-car proxies. A regular cluster runs the Kubernetes control plane containers; add a Service Mesh, and we also run the Service Mesh’s control plane containers. We’ve also added the overhead of TLS encryption. Adding a Service Mesh can roughly double the number of containers in the cluster, and that much additional compute can significantly increase the cost.
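The cost comparison is simple arithmetic. With N application pods (assuming one application container each), K Kubernetes control-plane containers, and M mesh control-plane containers, a cluster runs N + K containers without a mesh and 2N + K + M with one, so for large N the count approaches double. The sketch below encodes that; the example sizes and any per-proxy memory figure you pass in are hypothetical inputs, not measurements.

```python
# Sketch of the container-count arithmetic; all sizes are hypothetical inputs.
from dataclasses import dataclass


@dataclass
class ClusterSize:
    app_pods: int            # N pods, assuming one application container per pod
    k8s_control_plane: int   # K Kubernetes control-plane containers
    mesh_control_plane: int  # M containers the Service Mesh control plane adds

    def containers(self, with_mesh: bool) -> int:
        total = self.app_pods + self.k8s_control_plane
        if with_mesh:
            total += self.app_pods            # one side-car proxy per pod
            total += self.mesh_control_plane
        return total

    def sidecar_memory_mb(self, per_proxy_mb: float) -> float:
        """Extra memory for the side-cars alone; `per_proxy_mb` is your own estimate."""
        return self.app_pods * per_proxy_mb


if __name__ == "__main__":
    c = ClusterSize(app_pods=200, k8s_control_plane=6, mesh_control_plane=3)
    print(c.containers(False), c.containers(True))  # 206 409
```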

Do we need to secure communication between containers? If we’re running untrusted workloads, extremely sensitive or business-critical workloads, or a multi-tenant cluster, this is crucial. But if we’re only running first-party workloads, we could instead treat the entire cluster as a clean room and secure the cluster perimeter, saving a lot of hardware spend.

In short, you probably don’t need a Service Mesh if you’re running none of the following:

– highly sensitive services, such as public key infrastructure (PKI) or PCI-regulated payment systems

– untrusted workloads

– multi-tenant workloads

A look at Istio

Istio is an open-source Service Mesh, very similar to Linkerd. Istio injects a side-car proxy container into each pod so that it can manage communication into and out of the container. The container’s application doesn’t need to change to support Service Mesh network routing.

While the particulars of Istio setup are beyond the scope of this article, the setup is quite straightforward. To install Istio into Kubernetes, install its CRDs (Custom Resource Definitions) and the Istio control plane, for example with the istioctl command-line utility. Finally, add the label istio-injection=enabled to each namespace you want Istio to manage. From then on, the Istio proxy side-car is injected automatically into each new pod created in those namespaces (pods that already exist must be restarted to receive it). Istio configuration profiles enable and disable portions of the control plane to match your needs for security, customization, and ease of learning. Separately, we can require mutual TLS for maximum security, or leave it off to get observation and control without the additional compute cost of encryption.
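Automatic injection happens when a pod is created: Istio registers a Kubernetes mutating admission webhook that rewrites the pod spec before it is stored. The function below is a much-simplified, hypothetical model of that decision. It only adds a container named `istio-proxy` with a placeholder image, whereas the real injector also configures traffic redirection, certificates, and many proxy settings.

```python
# Hypothetical, much-simplified model of namespace-label-driven side-car injection.
import copy
from typing import Any, Dict

# Placeholder only; the real injector takes the proxy image from Istio's configuration.
PROXY_IMAGE = "registry.example/istio-proxy:placeholder"


def inject_sidecar(pod: Dict[str, Any], namespace_labels: Dict[str, str]) -> Dict[str, Any]:
    """Return the pod with a proxy container added if its namespace opted in."""
    if namespace_labels.get("istio-injection") != "enabled":
        return pod  # namespace did not opt in: leave the pod alone
    containers = pod.get("spec", {}).get("containers", [])
    if any(c.get("name") == "istio-proxy" for c in containers):
        return pod  # already injected: keep the mutation idempotent
    mutated = copy.deepcopy(pod)  # never mutate the caller's object
    mutated.setdefault("spec", {}).setdefault("containers", []).append(
        {"name": "istio-proxy", "image": PROXY_IMAGE}
    )
    return mutated
```

The application container is untouched, which is why the application does not need to change to join the mesh.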

Istio complements the Kubernetes Service with VirtualService, a construct that specifies more granular routing rules for traffic sent to a service, such as inspecting inbound headers for identity or splitting traffic among multiple targets (see figure). Istio also offers Gateway as an alternative to the Kubernetes Ingress, so even inbound traffic enters through the mesh and is routed securely between the services. A perfect security-in-depth use case is to terminate HTTPS at the Istio Gateway and use Istio’s mTLS to ensure all traffic from then on is encrypted.
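Here is roughly what the Gateway, VirtualService, and mTLS pieces look like as Istio resources, built as Python dictionaries and printed as JSON (which `kubectl apply -f -` accepts). The host name, secret name, header name `x-beta-user`, service name, and namespace are placeholders, and the `v1`/`v2` subsets assume a DestinationRule that defines them, which is not shown. Check the field names against the Istio version you run before applying anything.

```python
# Sketch of Istio manifests for HTTPS at the edge, header/weight routing, strict mTLS.
# All names, hosts, and the header are hypothetical placeholders.
import json
from typing import Any, Dict

Manifest = Dict[str, Any]


def gateway(name: str, host: str, credential_name: str) -> Manifest:
    """Terminate HTTPS at the mesh edge using a TLS secret named `credential_name`."""
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "Gateway",
        "metadata": {"name": name},
        "spec": {
            "selector": {"istio": "ingressgateway"},
            "servers": [{
                "port": {"number": 443, "name": "https", "protocol": "HTTPS"},
                "tls": {"mode": "SIMPLE", "credentialName": credential_name},
                "hosts": [host],
            }],
        },
    }


def virtual_service(name: str, host: str, gateway_name: str, service: str,
                    canary_percent: int) -> Manifest:
    """Header match for beta users, weighted v1/v2 split for everyone else."""
    if not 0 <= canary_percent <= 100:
        raise ValueError("canary_percent must be between 0 and 100")
    return {
        "apiVersion": "networking.istio.io/v1beta1",
        "kind": "VirtualService",
        "metadata": {"name": name},
        "spec": {
            "hosts": [host],
            "gateways": [gateway_name],
            "http": [
                {   # Rules are evaluated in order: beta testers always get v2.
                    "match": [{"headers": {"x-beta-user": {"exact": "true"}}}],
                    "route": [{"destination": {"host": service, "subset": "v2"}}],
                },
                {   # Everyone else: canary split by percentage.
                    "route": [
                        {"destination": {"host": service, "subset": "v1"},
                         "weight": 100 - canary_percent},
                        {"destination": {"host": service, "subset": "v2"},
                         "weight": canary_percent},
                    ],
                },
            ],
        },
    }


def strict_mtls(namespace: str) -> Manifest:
    """Require mTLS for every workload in `namespace`."""
    return {
        "apiVersion": "security.istio.io/v1beta1",
        "kind": "PeerAuthentication",
        "metadata": {"name": "default", "namespace": namespace},
        "spec": {"mtls": {"mode": "STRICT"}},
    }


if __name__ == "__main__":
    items = [
        gateway("shop-gateway", "shop.example.com", "shop-tls-cert"),
        virtual_service("shop", "shop.example.com", "shop-gateway", "storefront", 10),
        strict_mtls("shop"),
    ]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```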

API Gateway instead of Service Mesh

If we’re running only trusted, first-party workloads in the cluster, we can take an alternate approach using an API gateway such as Kong. The primary assumption of a Service Mesh is that we don’t trust the cluster, so we must secure each container. We could instead treat the entire cluster as trusted and focus on securing the cluster boundary.

To sanitize inbound traffic, we can choose a lighter-weight API gateway or a Web Application Firewall (WAF). To ensure container health, focus on a trusted build chain. Only use base containers from a trusted registry, and run vulnerability scanning at build time for each container. During the build, record the name and version of all software installed in the container, including both OS packages and software libraries. Whenever a new vulnerability is discovered, compare this inventory against the vulnerability database. If a container includes any vulnerable packages, rebuild it and deploy a new, safe version.
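Because the inventory (often called a software bill of materials, or SBOM) is captured at build time, checking a newly published vulnerability does not require rescanning every image: you only match the advisory against the stored inventories. This sketch assumes exact version matching and uses hypothetical image names, package names, and advisory IDs; real vulnerability feeds describe affected version ranges.

```python
# Sketch: find images to rebuild by matching advisories against build-time inventories.
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Set, Tuple

Package = Tuple[str, str]  # (name, version) recorded at build time


@dataclass(frozen=True)
class Advisory:
    id: str                              # hypothetical advisory identifier
    package: str
    vulnerable_versions: FrozenSet[str]  # exact versions; real feeds use ranges


def images_to_rebuild(inventories: Dict[str, Set[Package]],
                      advisories: List[Advisory]) -> Dict[str, List[str]]:
    """Map each affected image to the advisories that hit it."""
    hits: Dict[str, List[str]] = {}
    for image, packages in inventories.items():
        for adv in advisories:
            if any(name == adv.package and version in adv.vulnerable_versions
                   for name, version in packages):
                hits.setdefault(image, []).append(adv.id)
    return hits
```

Each image in the result is a candidate to rebuild from a patched base and redeploy; images absent from the result need no action for these advisories.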

By focusing on the cluster boundary and securing the build pipeline, we can consider the cluster a clean room and everything inside it safe. We no longer need to secure each running container individually. With this alternate approach, we may choose to build separate Kubernetes clusters for different business units or risk tolerances, keeping sensitive workloads in clusters separate from more casual business concerns.

Cleaning up the Mess

There can be downsides to reaching for a Service Mesh too quickly. We must remain cognizant of the cost of the additional resources a Service Mesh requires. You need a Service Mesh if you have any of these business needs:

– Running highly sensitive services (PKI, PCI)

– Running untrusted workloads

– Running multi-tenant workloads

Reach for a Service Mesh for observing, controlling, or securing traffic in a Kubernetes cluster. Because the Service Mesh intercepts traffic into and out of each container, it’s a great way to monitor and control traffic. Whether you’re looking to secure this traffic with mutual TLS, authorize inter-service communication, or monitor traffic between services, a Service Mesh can be a great choice to clean up the mess.